Mr. Steven Kopits takes issue with the Harvard School of Public Health led study’s point estimate (4645) and confidence interval (793, 8498) for Puerto Rico excess fatalities post-Maria thusly:

Does Harvard stand behind the study, or not?

That is, does Harvard SPH believe that the central estimate of excess deaths to 12/31 is 4645, or not? Does it stand behind the confidence interval, or not? Is there still a 50+% probability that the death toll comes in over 4600? If there is, then the people of PR need to start looking for the 3,250 missing or the press needs to assume PR authorities are lying. Those are the implied action items.

Or should we just take whatever number HSPH publishes in the future and divide by 3 to get a realistic estimate of actual?

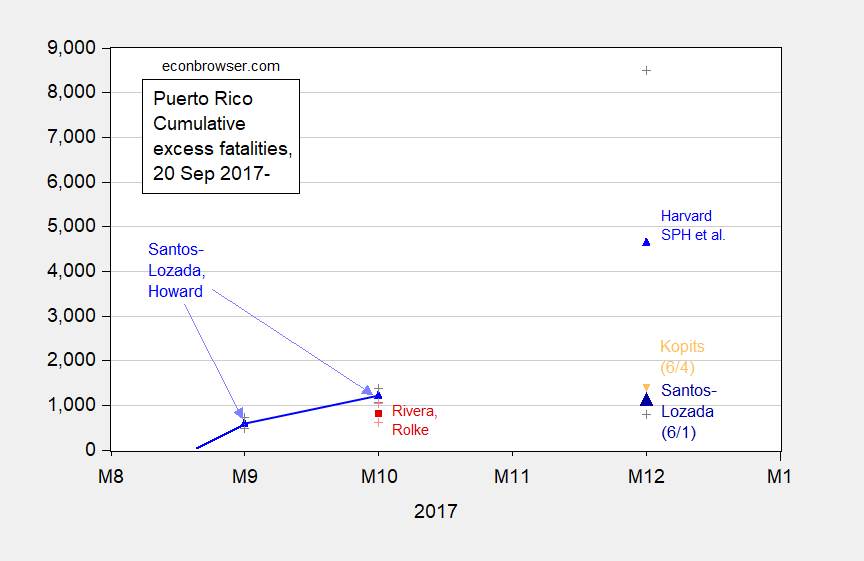

Let’s show a detail of the graph previously displayed (in this post):

Figure 1: Estimates from Santos-Lozada and Jeffrey Howard (Nov. 2017) for September and October (calculated as difference of midpoint estimates), and Nishant Kishore et al. (May 2018) for December 2017 (blue triangles), and Roberto Rivera and Wolfgang Rolke (Feb. 2018) (red square), and Santos-Lozada estimate based on administrative data released 6/1 (large dark blue triangle), end-of-month figures, all on log scale. + indicate upper and lower bounds for 95% confidence intervals. Orange triangle is Steven Kopits estimate for year-end as of June 4. The cumulative October figure for Santos-Lozada and Howard is the author’s calculation based on reported monthly figures.

The middle paragraph shows a misunderstanding of what a confidence interval is. The true parameter is either in or not in the confidence interval. Rather, this would be a better characterization of a 95% CI:

“Were this procedure to be repeated on numerous samples, the fraction of calculated confidence intervals (which would differ for each sample) that encompass the true population parameter would tend toward 95%.”
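The repeated-sampling reading quoted above can be illustrated with a small simulation. This is only a sketch under stated assumptions: the population (normal, with a hypothetical mean of 100 and standard deviation of 15), the sample size, and the number of trials are all invented for illustration and have nothing to do with the Puerto Rico survey data.

```python
import random
from statistics import NormalDist, mean, stdev


def coverage(true_mean=100.0, true_sd=15.0, n=50, trials=2000, seed=0):
    """Share of 95% intervals (one per simulated sample) that contain true_mean.

    All parameter values are hypothetical; they only illustrate the procedure.
    """
    rng = random.Random(seed)
    z = NormalDist().inv_cdf(0.975)  # about 1.96
    hits = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, true_sd) for _ in range(n)]
        centre = mean(sample)
        half_width = z * stdev(sample) / n ** 0.5
        # Each sample yields a different interval; the true mean never moves.
        if centre - half_width <= true_mean <= centre + half_width:
            hits += 1
    return hits / trials


print(f"share of intervals containing the true mean: {coverage():.3f}")
```

The 95% describes the long-run behavior of the procedure across samples. Any single interval either contains the true parameter or does not.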

In other words, it is a mistake to say there should be a 50% probability that the actual number will be above the point estimate. But that is exactly what Mr. Kopits believes a confidence interval means. He is in this regard incorrect. From PolitiFact:

University of Puerto Rico statistician Roberto Rivera, who along with colleague Wolfgang Rolke used death certificates to estimate a much lower death count, said that indirect estimates should be interpreted with care.

“Note that according to the study the true number of deaths due to Maria can be any number between 793 and 8,498: 4,645 is not more likely than any other value in the range,” Rivera said.

Once again, I think it best that those who wish to comment on estimates should be familiar with statistical concepts.

Why don’t you add a line for the official PR statistics?

An earlier post was entitled “Please, Do Not Comment on This Post Unless You Understand the Following Terms”. One of those terms was confidence interval, which you clearly do not understand. And yet you comment anyway? Methinks your fifteen minutes of fame expired a long time ago.

steven, i would take this as a learning opportunity. you created an argument against the harvard study, and used a misunderstanding of statistics as the basis for such an argument. in essence, you placed false words (intentional or not) into the mouths of the harvard investigators because you failed to properly understand confidence intervals and the implications of their meaning. this amounted to a strawman argument on your part. as i stated in a previous post, you owe the harvard investigators an apology.

I said that the Harvard number was ‘wildly exaggerated’, which the Puerto Rican authorities confirmed two days later with actuals.

Now, if you want to challenge the PR numbers, by all means, go ahead.

steven, that is exactly the point. you are using “actuals” with authority and criticizing another report. how confident are you that these “actuals” are correct? at best, they represent a lower bound. the survey data was an active collection. the “actuals” was a passive collection, waiting on bodies to arrive for review. you can fault the harvard report by saying the small sample size produced a confidence interval that was too large to be of value, and i would not disagree with that angle. but to call it garbage while you apparently consider the “actuals” pristine is not very fair. the harvard folks went into the jungle to obtain their results. you are sitting in an air conditioned chair and saying they did not go far enough into the jungle. for a phd who should understand the difficulties of field research, that is a pretty poor position to take.

baffling: Just for clarification, Mr. Kopits does not have a PhD, according to his bio.

“Mr. Kopits does not have a PhD”

And?

Since most people with a PhD have trouble delivering a useful interpretation of statistical results, I wouldn’t give a damn. 🙂

It is not by chance that we have publications like:

http://www.ohri.ca/newsroom/seminars/SeminarUploads/1829%5CSuggested%20Reading%20-%20Nov%203,%202014.pdf

Ulenspiegel: I mentioned that Mr. Kopits had no PhD because baffling asserted that a PhD should understand the difficulties of field work. In that sense, my comment was exculpatory.

Menzie is correct. On the other hand, we are talking basic statistics for a publication like this and the error is not in the statistical analysis.

“Note that according to the study the true number of deaths due to Maria can be any number between 793 and 8,498: 4,645 is not more likely than any other value in the range,” Rivera said.

I believe this is incorrect if you assume a normal distribution. The mean literally is the most likely outcome compared to any other in the distribution. And values near the mean are far more likely than values far from the mean. Usually, when social science researchers are talking about confidence intervals, one assumes they are using a normal distribution. Was the assumption different in this case? I didn’t see it in the study.
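As a rough check on what a normal-approximation reading of the published interval would imply, the arithmetic can be sketched as follows. This assumes, as the comment above does, a symmetric interval of the form estimate ± 1.96 standard errors; the study's actual method is not confirmed here. The figures 64 and roughly 1,400 are the official counts quoted elsewhere in this thread.

```python
from statistics import NormalDist

# Figures as reported in the thread for the Harvard-led study.
POINT, LOWER, UPPER = 4645, 793, 8498

# ASSUMPTION: the interval is estimate +/- z * SE (normal approximation).
Z95 = NormalDist().inv_cdf(0.975)          # about 1.96
midpoint = (LOWER + UPPER) / 2             # equals the point estimate if symmetric
implied_se = (UPPER - LOWER) / (2 * Z95)   # standard error implied by the width


def z_score(value):
    """Distance of a value from the point estimate, in implied standard errors."""
    return (value - POINT) / implied_se


print(f"midpoint of interval: {midpoint:.1f}")
print(f"implied standard error: {implied_se:.0f}")
for label, value in [("initial official count", 64),
                     ("updated PR figure (approx.)", 1400)]:
    inside = LOWER <= value <= UPPER
    print(f"{label}: {value}, z = {z_score(value):.2f}, inside interval: {inside}")
```

The interval is symmetric about the point estimate to within rounding, which is what a normal approximation would produce, and the implied standard error is large relative to the estimate itself. None of this turns the interval into a probability statement about the true death toll.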

“Usually, when social science researchers are talking about confidence intervals, one assumes they are using a normal distribution.”

Did you just finish your copy of Statistics for Dummies or what? Yes in the beginning most Stats 101 lectures assume normal distributions to make it easy for the freshman students. But something tells me we are in store for another one of Menzie’s posts where he schools you on the basics.

I was a stats TA in graduate school.

I pity the students.

I’d put roughly the same probability of that being a fact as “Princeton” Kopits having any ties to Princeton U, or really just about anything he types or says.

Do you think a man who doesn’t understand the basic concept of a confidence interval was a TA for stats?? That’s laughable.

A person might forget a differential equation after being away from math or stats a long time. But not remembering or being able to “brush up on” what a confidence interval is?? If “Princeton” Kopits had the intelligence of a middle school student he could have at least reacquainted himself with the basic terminology before putting multiple false statements in his blog comments.

Steven, had the assumption been different from a normal distribution would they have still selected the mid-point in their release? Dunno. I’m just an old analyst who likes a little better precision with my morning coffee.

Baffled, pgl, Menzie and any others wishing to support this study: citing a range as large as Harvard’s adds little value to an estimate. Its value lies in the attempt to produce an estimate using a different approach. Academic interest does not exceed the study’s value to provide a true value.

Remember, Menzie’s position has been that the CI is important because???? It shows: “The true parameter is either in or not in the confidence interval. Rather, this would be a better characterization of a 95% CI” Admittedly, that is an estimate better than ZERO and 75% the total population of PR, but not that much better.

What can the Harvard estimate be used for in the REAL WORLD? Baffled, pgl, Menzie that is not a rhetorical question. Remember, your answer could determine the value of the Harvard Study. If you need a range (non-CI) your answers could support the value from “Garbage” to “Great Significance”, and guess who will be the judges. Not Steven and I but the PR and US administrations and tax payers.

You could have a weird distribution where the central estimate was not the most likely. But this is social science, not rocket science. With confidence intervals, unless the researchers make some explicit statement to the contrary, one would assume they use a normal distribution where the central estimate (mean) is the most likely single answer and the probabilities are 50/50 higher or lower.

When are you and Steven publishing your text on Statistical Analysis? I’ll make sure to save my money for something actually worth buying.

Pgl, personally, I’d be afraid to touch anything of yours for fear the angry ridicule is catching. OTH, to show your analytical prowess, you could just answer my question: What can the Harvard estimate be used for in the REAL WORLD? Baffled, pgl, Menzie that is not a rhetorical question.

I guess you think massive deaths in Puerto Rico is not the REAL WORLD. Well dude it is – and the House may have a 9/11 style hearing on this issue that you clearly could care less about.

I’ll wait, not holding my breath, for your answer. Deflection is not an answer.

CoRev: I believe part of the motivation for the study was to check on the likelihood that 64 fatalities from Maria made sense. The confidence interval did not bracket that number, so the researchers concluded that 64 was an undercount. Had they known about Mr. Kopits’s range of 200-400, they would have said that was an undercount as well. So in that sense, they achieved what they said they had set out to do.

Now as for judges, I’ll note US taxpayers shouldn’t have any more right to judge than Joe Blow, given that we know of no US government monies being used to support the analysis. The report was judged in the sense of being peer reviewed, which is more than what can be said for several of the other assessments (I use the word loosely) floating around.

Menzie, …”part of the motivation for the study was to check on the likelihood that 64 fatalities from Maria made sense….So in that sense, they achieved what they said they had set out to do.” Yup! Few PR officials actually believed the “official” number. “San Juan Mayor Carmen Yulín Cruz told CNN on November 3 that she thought the toll could be 500.” https://www.cnn.com/2017/12/09/us/puerto-rico-hurricane-deaths-and-assistance/index.html So they just confirmed what most already knew, except for the responsible official who was restricted by the process.

Both Steven and the PR authorities changed their estimates after newer and better data was released. Moreover, the PR authorities admitted several times before and after the Harvard study was released that the 64 death estimate was low. That was confirmed by the other studies released well before Harvard started their study showing many times that 64 death estimate.

The only motivation I could find in the study and the press release was a desire to have an estimate independent from the PR official numbers. Ok! They succeeded and achieved their desired goal.

As for your comment regarding being judged, I remember that being associated with the value of Harvard’s study in developing policy and legislation, where the tax payer is surely a major judge.

Now, let’s talk about your motivation in writing 6 articles trying to show this study had value other than an academic exercise in providing a non-requested independent estimate. You have yet to define any value to the Harvard estimates. The original motivation of clarifying the 64 deaths as probably wrong was well known and accepted before they even started. Why have you singled out those who questioned the value of this study’s estimates? What is its value outside the academic environment?

Menzie you wrote in part;

“I believe part of the motivation for the study was to check on the likelihood that 64 fatalities from Maria made sense”

Official statistics are slow to gather, so the motivation was easy: get there before the standard bureaucratic gathering so as to achieve publicity. And as I wrote before, the time and money spent saved zero lives. That was a waste of time and energy that could have been better spent saving lives and relieving misery. The Harvard people have no regard for human life except to publicize it for their own glory.

If statistics were the important thing, the study could have been done later, and achieved better results. But the study was less important than the publicity.

Ed

“If statistics were the important thing, the study could have been done later, and achieved better results”

Ed, survey data would not be expected to get any better the longer you delay collection. Your complaints are unfounded. You are simply jealous and spiteful of the Harvard folks. Did they deny you admission in the past? You are simply acting like a snowflake.

There is a distinction between causality and attribution. The 64 is causality. Those died directly from the hurricane.

The rest is attribution. Those people did not die from the hurricane, but principally from a sustained loss of power. Now, did they die due to the hurricane; the bankruptcy, mismanagement and decades of state-ownership at PREPA; or from a lack of sufficiently determined support from the mainland? That’s not a question of causality, but attribution.

According to Table S8 of the study, here are the causes of death for those surveyed

Causes unrelated to the hurricane 12

Interruption of necessary medical services 12

Other reason 9

Medical complications from an injury, trauma, or illness directly due to the hurricane 3

Trauma (other) 1

Suicide 1

Trauma (vehicle accident) 0

Trauma (building collapse) 0

Trauma (landslide) 0

Drowning 0

Fire 0

Electrocution 0

Note that exactly zero were direct victims of the hurricane. And note further that 40% of deaths can be attributed to the hurricane, even though excess deaths were only 13% of total deaths for the period. You might imagine they’re struggling a bit at Milken now.
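The shares behind that comment can be tallied directly from the counts listed above. This is only a sketch: the comment does not say which rows it combined to reach 40%, so the grouping below (interruption of medical services plus medical complications directly due to the hurricane) is an assumption that happens to land close to that figure.

```python
# Counts as listed in the comment above (attributed there to Table S8).
table_s8 = {
    "Causes unrelated to the hurricane": 12,
    "Interruption of necessary medical services": 12,
    "Other reason": 9,
    "Medical complications directly due to the hurricane": 3,
    "Trauma (other)": 1,
    "Suicide": 1,
    "Trauma (vehicle accident)": 0,
    "Trauma (building collapse)": 0,
    "Trauma (landslide)": 0,
    "Drowning": 0,
    "Fire": 0,
    "Electrocution": 0,
}

total = sum(table_s8.values())

# ASSUMPTION: these two rows are the ones treated as hurricane-attributed.
attributed_rows = [
    "Interruption of necessary medical services",
    "Medical complications directly due to the hurricane",
]
attributed = sum(table_s8[row] for row in attributed_rows)
share = attributed / total

print(f"deaths reported in the survey: {total}")
print(f"attributed under this grouping: {attributed} ({share:.1%})")
```

With only 38 reported deaths in total, moving a single death between categories shifts the share by more than two percentage points, so the breakdown is sensitive to how each case was classified.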

12 authors and peer review? And not one of them could see a 3x miss at 60 days coming right at them?

Wow.

Why would you assume a normal distribution? I do not know the upper bound, but the lower bound cannot be below zero. And unless people lie on the survey, there must be a lower bound already defined for the distribution. If I know there are 5 confirmed deaths, there is zero probability I can get a lower death count. I believe the paper assumed a Poisson distribution, which only becomes normal under large n? And I think we have already discussed the idea that n is not large in this survey. Assuming a normal distribution for number of deaths does not seem correct. Those of you in the statistics field can better correct me on this, I hope.
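The point about small counts can be made concrete with a short comparison of a Poisson distribution against its normal approximation. This is a sketch only: whether the study actually used a Poisson model is the commenter's belief, not something confirmed here, and the expected counts of 3 and 400 are hypothetical values chosen to contrast a small count with a large one.

```python
from math import exp, sqrt
from statistics import NormalDist


def poisson_pmf(k, lam):
    """P(X = k) for a Poisson variable with mean lam, built up iteratively."""
    p = exp(-lam)
    for i in range(1, k + 1):
        p *= lam / i
    return p


def poisson_quantile(q, lam):
    """Smallest k whose cumulative probability reaches q."""
    cumulative, k = 0.0, 0
    while True:
        cumulative += poisson_pmf(k, lam)
        if cumulative >= q:
            return k
        k += 1


def compare(lam):
    """Normal-approximation 95% range vs. Poisson quantiles for mean lam."""
    z = NormalDist().inv_cdf(0.975)
    sd = sqrt(lam)  # a Poisson variable has variance equal to its mean
    return {
        "normal_low": lam - z * sd,
        "normal_high": lam + z * sd,
        "poisson_low": poisson_quantile(0.025, lam),
        "poisson_high": poisson_quantile(0.975, lam),
        "normal_mass_below_zero": NormalDist(lam, sd).cdf(0),
    }


for lam in (3, 400):  # hypothetical expected counts
    print(lam, compare(lam))
```

For the small expected count the normal approximation extends below zero, which a count cannot do, while the Poisson range stays at or above zero and is visibly asymmetric. For the large expected count the two are close.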

So, HSPH conducts a survey of 4,000 households who claim that Menzie Chinn is 17.5′ tall, with a 95% confidence interval of 1′ to 34′.

The New York Times, WaPo and every other newspaper runs the headline: Incredible Academic 17’6″ Tall!

Now, a non-height analyst looks into the press and sees a report that the NYT quoted the Dean of UW as stating that he passed Menzie in the hall and thought Menzie was “maybe 5’10” at the end of October”. So Menzie grew 12′ in two months!

Now, that analyst is inclined to think that given the rather incredible statements about Menzie, he would expect to see a reconciliation of Menzie’s purported height in the study to the last known actual — which is in fact referenced in the study! How do you reconcile that? Maybe the Dean was wrong. Maybe he didn’t see Menzie, maybe he was too far to make a good assessment. Maybe the science labs developed something new which gave Menzie a growth spurt. But, golly, it would be nice if the report made some sort of effort at reconciling two vastly conflicting data points.

Given that this was not done, or seemingly even attempted, the analyst calls the study ‘garbage’ because he just doesn’t see how Menzie could have grown 12 ft without some sort of explanation.

And then, at the end of the school year, the basketball coach saw Menzie shooting hoops with the basketball team (unquestionably worth the price of admission), and the coach — something of an expert in height — suggests Menzie “probably wasn’t much over 6 feet.”

So now, the analyst turns back to HSPH and asks — since we still have not actually measured Menzie — “Do you stand by your original mean estimate and confidence interval?” Because, when the analyst read the report, he assumed that HSPH was using a normal distribution with the usual 1.96 std deviation which translates into a 95% confidence interval, with the mean the most likely outcome with an equal chance of the actual turning out higher or lower. So does HSPH still think it most likely that Menzie is 17.5 feet tall? Because if you do, we should all buy tickets to visit Madison to see something you won’t see anywhere else.

And if you don’t, what in god’s name made you think Menzie would grow 12 ft in two months?

Are you a basketball coach? Being from Brooklyn, I’m sure I can pull a team together to take yours on. We will even spot you 50 points and still win!

Of course this thread is not about sports – it is about the massive number of people who died needlessly. But go ahead – dismiss this with your amateur attempts at being a statistician.

Pgl, massive is how large? If you don’t show your work at least show your source.

Sum 9/11 and Katrina and tell me that this is not large. Oh wait – preK arithmetic may be hard for you. Hint – take off your shoes so you can count to 20!

I see you are still deflecting. Answer the “simple” question. If you can

I see you still have added credibility to the Harvard mid-point number. Even Menzie won’t do that, past insisting it might be credible because it is within the CI. But so then is 8,000, and that is a massive number.

“I see you are still deflecting. Answer the “simple” question. ”

I would define 1000 deaths as large. But I guess you would weight this by race. Maybe it would take 100,000 Puerto Ricans to equate to 1000 white people in your world.

OK – I answered your stupid question. Now move out of the way without your totally uncaring attitude.

Pgl, thank you, that wasn’t so hard, was it? We agree, a thousand deaths from a single storm is a disaster. Now answer the other questions. I’ll repeat them just so you don’t forget: 1) What can the Harvard estimate be used for in the REAL WORLD?

2) You’ve been given the opportunity to suggest how the response could have been better. Show us!

The MPR study has twelve (twelve!) authors, including 4 MDs and 2 PhDs, and not a one of them can extrapolate a simple graph out two months! Is there not one person on this team who can sense check a report? Did not one person on this team understand that this will be a visible, politicized study the findings of which will come under a microscope?

Where is the senior manager on this report who says, “You know, those are great findings guys, and I know you all worked hard on this. But here’s the thing. If we publish this, every major news outlet in the country will run it, and a number will use it to impugn the President and the conduct of the Republican Party. So, by all means, let’s run it. But before that, I need a reconciliation of our findings to the latest actuals. And I want someone to call up the PR governor’s office and let him know this is on its way, to give them an opportunity to comment before it hits the streets. What we absolutely, positively want to avoid is a big splash up front, after which our numbers are exposed as untenable. If that happens, no Red state conservative will ever trust us again, and we will lose our credibility in Congress. And let’s be clear. For the public health system, our credibility is paramount, because we may need funding or support from the President or Congress on short notice with high stakes. And we may need for them to grant that essentially on our credibility, because they trust our competence and motives. The last Ebola outbreak was a case in point.

“So, let’s remember we are in the public health business, not in politics. We don’t have to be popular. But we do have to be courteous. And most of all, we have to be right. I’d rather we caveat the hell out of the findings if we have to. So, show me our numbers against the actuals. Let’s update those with whatever the Puerto Ricans will give us, and let’s make sure we give them an opportunity to chime in before this hits the streets.”

Where was that senior manager on this project?

How much is Trump paying you for this sad hit job? It is not only really disgusting but at the end of the day, it strikes me as stooopid. Maybe Barnes and Noble should refund what you paid for Statistics for Dummies.

His only other “bragging point”, other than dreaming up a connection to Princeton U, is intermingling with Euro-trash (although how much can we believe even that at this stage??) The only credibility I can give to it is, it’s such a poor “bragging point” to use it must be true. Due to the Euro-trash connection, I have always considered “Princeton” Kopits to be the poor man’s Paul Manafort. Maybe “the poor man’s George Papadopoulos” is more fitting.

https://www.youtube.com/watch?v=CX2ikbrh7b4

https://www.youtube.com/watch?v=XfOuHr8jb50

“To the best of my knowledge” is usually what Skeletor prefaces statements with when they ask him when was the last time he visited Castle Grayskull.

https://www.youtube.com/watch?v=T7_K28GZHdo

He says he was a stats TA while in graduate school. I doubt this was Princeton and of course Steven could not be bothered to say which school. Wait – I know. National Review University!

Columbia

@ pgl

“Princeton” Kopits never says does he?? People have a right to some privacy—but if we weigh this with his exertion at self-promotion and a blog title that is bound to create false-impressions, what can we deduce his academic credentials look like?? How many people, say with any kind of a business major from a 4-year accredited University couldn’t express themselves correctly about a confidence interval (among other basic errors)??

“Princeton” Kopits silence on educational background speaks VOLUMES.

@ “Princeton” Kopits

You mean to tell us that no one at Harvard phoned you to be the “Senior Manager”!?!?!?!?! “Princeton” Kopits, this is a travesty!!!! Both me and your mother think you would have “done a hell of a job, Stevie!!!”. But seriously “Princeton” Kopits, all jokes and satire aside, you ever think of working for Trump as the head of FEMA?? I have a feeling they’d be happy to have a man like you, with your, uuuuuhh, “unique qualifications” in the field of…….. statistics….. ?? uuuuuhh….. mathematics……. ?? Uhm, Well….. you’d do a hell of a job Stevie!!!!!

Moses –

I would happily work on illegal immigration. Here are the head-to-heads, Puerto Rico vs Mex / Cent Am migrants, Sept. 20, 2017 to March 30, 2018:

PR deaths direct + indirect from Sept. 20, 2017 – March 30 2018

1600

of which, potentially avoidable with better government intervention: 400?

Mexican and Central American Migrant Deaths and Predation, Sept. 20, 2017 – March 30, 2018

Died in Mexican interior or desert 1,047

Raped 64,364

Kidnapped / Extorted 15,699

Assaulted and Robbed 104,658

Deterred Entrants (Turned Around) 62,795

Extended Incarceration 52,329

Economic migrant drug smuggling 23,548

Forced Prostitution, Labor 11,512

Total 335,951

Of which, attributable to US policy failures 302,356

And we’ve had six Menzie posts on Puerto Rico and exactly zero on migrants.

Steven, not only zero on migrants, but I also do not remember a post on the values in the latest jobs report. I do remember some carping about Trump mentioning it was about to be released. Even that carping was mostly wrong in claiming it to be illegal, immoral, unethical, and probably an attack on humanity.

@ “Princeton” Kopits

Professor Chinn has had multiple posts on immigration. Just off the top of my poor memory I remember a striking one he did on the Chinese Exclusion Act, and I’m pretty damned sure he’s addressed, both directly and indirectly, Mexican immigrants and Central American immigrants. Maybe why you don’t “remember” Menzie eloquently and persuasively discussing immigration is that Menzie chooses to have an optimistic outlook on humanity’s ability to contribute and benefit each other. See that’s the great irony of 1st, 2nd, and 3rd generation immigrant people to this nation—-for all the pure unadulterated crap they have to withstand from their fellow Americans—they still go on believing EVEN STRONGER that mankind is inherently good, and believing EVEN STRONGER in the synergy we create for each other—Something Menzie’s Mom and Menzie understand so deeply, but it’s looking doubtful at this point the pseudo-intellectual “Princeton” Kopits ever will.

Well, technically, I believe Menzie was born in this country. I am an immigrant.

@ “Princeton” Kopits

You did notice I said 2nd generation immigrant (just guessing in Menzie’s case) and 3rd generation right??

As far as you are concerned, Kopits, I’ve always assumed you were a temporary workers visa immigrant to planet Earth, and any day now would unveil the reasons for your visit. Come on!!! Somewhere back on your home planet there has to be some gorgeous girl waiting on you??

https://www.youtube.com/watch?v=PkL-s7Sskzc

House Democrats seek independent commission on Hurricane Maria deaths:

http://nbc24.com/news/connect-to-congress/house-democrats-seek-independent-commission-on-hurricane-maria-deaths

‘Members of the Congressional Hispanic Caucus announced Wednesday they are preparing legislation to create an independent commission to investigate the federal response to Hurricane Maria, in the wake of new data indicating at least 1,300 more people died in Puerto Rico due to the storm than initially reported. “The evidence is making it clear, Maria may be one of the worst natural disasters in U.S. history,” said Rep. Nydia Velasquez, D-N.Y., at a Capitol Hill press conference. Researchers at Harvard University estimated more than 4,000 Puerto Ricans may have died as a result of the September 2017 hurricane and the damage it caused. There have been some questions about their methodology, but the Puerto Rico Department of Health released its own updated numbers on Friday, reporting about 1,400 deaths may be related to the storm, significantly more than the 64 deaths announced last fall. The Congressional Hispanic Caucus intends to introduce a bill next week that would create a commission modeled after the one that investigated the Sept. 11, 2001 terrorist attacks to probe the preparation, response, and recovery efforts surrounding the storm. “For it to never happen again, we need the facts, and that is what we are asking for today,” Velasquez said. Several members of the caucus criticized President Donald Trump and federal officials for their response to the storm. “Puerto Rico is clearly President Trump’s most significant failure in his administration,” said Rep. Adriano Espaillat, D-N.Y. Puerto Rican officials have said they always expected the death toll to be higher than 64, but Democrats want to know why the initial count was so low, why it took nine months to produce updated figures, and what impact the perception that so few people died had on the urgency of the government’s response. “Why would somebody give an undercount in a disaster?” asked Rep. Bennie Thompson, D-Miss.
Members pointed to Trump’s comments while visiting Puerto Rico after the storm that the island should be “very proud” that so few people died in comparison to “a real catastrophe” like Hurricane Katrina in 2005. The new data places Maria’s death toll either higher or just a few hundred victims below Katrina’s devastation. “Yes, Mr. President, this is a real catastrophe,” Velasquez said. White House Press Secretary Sarah Sanders defended the administration’s handling of the storm at a press briefing Tuesday. “The federal response, once again, was at a historic proportion,” she said. “We’re continuing to work with the people of Puerto Rico and do the best we can to provide federal assistance, particularly working with the governor there in Puerto Rico. And we’ll continue to do so.” Caucus members were unconvinced. Rep. Ruben Gallego, D-Ariz., accused the White House of treating Puerto Ricans like second-class citizens. “If this had happened anywhere on the mainland, it would be a national controversy,” Gallego said. Democrats noted that President Trump is meeting with FEMA officials Wednesday as the new hurricane season begins, and they urged him to seriously reassess the government’s preparation in light of the new data on Puerto Rico. “This is not the time for self-congratulations and victory laps,” Velasquez said.’

It is time for Steven K. and CoRev to get their spin ready for Devin Nunes & Co.!

Pgl, with your superior skill set could you explain the logic in the statement: “Puerto Rico is clearly President Trump’s most significant failure in his administration,” said Rep. Adriano Espaillat, D-N.Y. Puerto Rican officials have said they always expected the death toll to be higher than 64, but Democrats want to know why the initial count was so low, why it took nine months to produce updated figures, …” Trump is now responsible for local death count/estimates and their reporting time frame? You’ve been given the opportunity to suggest how the response could have been better. Show us!

Rep. Adriano Espaillat, D-N.Y has every right to see this utter failure to be important to the people he represents. Of course we all know Trump has so many massive failures in leadership, it is difficult for me to weigh which one was the worst. But leave it to you to defend his serial and massive incompetence at every turn.

You also suggest that the Federal response could not have been better? My – you have the lowest bar ever for what FEMA could or should have done. Even Brownie could outshine your pathetic expectations.

Pgl, “You also suggest that the Federal response could not have been better?” Where, when? Or is this going to be another NY SENT aid the day after (or was it the 2nd day) the hurricane struck?

I do remember saying something like: no one has shown exemplary response to this disaster. Maybe that kind of comment isn’t vituperative enough for you, and gets translated to “could not have been better”, if so you are seriously in error or have a reading comprehension problem.

@pgl,

Lot of good the 9/11 commission did! Being a Cold War supporter of fighter interceptors, the least I expected would be two ready launch alert F-15’s in West Moriches and 4 F-15’s on continual 5 minute response…………….instead just a NY ANG rescue unit, which could have formed a tiny fraction of the rescue response that could have “adequately” covered Maria.

The comments in Menzie’s posts remind me of replies to Trump tweets. Further bolstering my assertion that Menzie and the Donald share a rather large (albeit unfortunate) number of characteristics.

To contribute to this dumpster fire of an otherwise fine blog (when will Dr Hamilton post again?!?!?), I have a theory. My theory is that Moses Herzog is Menzie’s alter, his “burner account” (since I referenced twitter) for econbrowser. Maybe Menzie is the Kevin Durant of econblogs. I go back and forth between ignoring Moses Herzog and not only due to being amused by this theory. Although truly I can’t imagine anyone would/could? purposefully act in such a way but with enough data points, it’s not difficult to make an elephant wipe its ass with its trunk.

Being “Menzie’s alter” is a great and high compliment for me (which I haven’t earned), but I don’t think Menzie deserves that low swipe.

Kopits: “So, HPSH conducts a survey of 4,000 households who claim that Menzie Chinn is 17.5′ tall, with a 95% confidence interval of 1′ to 34′. “

As ridiculous as your example is, it provides better information than armchair analyst Kopits who says that the study is garbage because he estimates Menzie Chinn is somewhere between 6 and 7 inches tall.

Kopits is suggesting he got a Master of Finance from Columbia U. I know some of the faculty there. They have to be hanging their heads in shame that they gave him a degree.

Steven Kopits As a government worker I was bound by the National Institute of Standards and Technology (NIST). According to the NIST handbook confidence intervals are described as follows:

…if the same population is sampled on numerous occasions and interval estimates are made on each occasion, the resulting intervals would bracket the true population parameter in approximately 95 % of the cases.

https://www.itl.nist.gov/div898/handbook/prc/section1/prc14.htm

That’s not quite the same thing as what you’re saying. Notice that it’s about intervals (plural) bracketing the true population (not sample) parameter.
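The repeated-sampling reading in the NIST passage can be checked with a small simulation. This is a minimal sketch, not anything from the Harvard study: the population (normal, mean 10, standard deviation 3), the sample size, and the function names `ci_covers` and `coverage` are all assumptions made for illustration.

```python
import random
import statistics


def ci_covers(true_mean, true_sd, n, rng, z=1.96):
    """Draw one sample and report whether its 95% interval brackets true_mean."""
    sample = [rng.gauss(true_mean, true_sd) for _ in range(n)]
    mean = statistics.fmean(sample)
    # Normal-approximation half width: z * (sample standard deviation / sqrt(n)).
    half_width = z * statistics.stdev(sample) / n ** 0.5
    return mean - half_width <= true_mean <= mean + half_width


def coverage(trials=2000, true_mean=10.0, true_sd=3.0, n=100, seed=1):
    """Fraction of independently computed intervals that contain the true mean."""
    rng = random.Random(seed)
    hits = sum(ci_covers(true_mean, true_sd, n, rng) for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    print(f"share of intervals covering the true mean: {coverage():.3f}")
```

Each simulated interval either contains the true mean or does not; the 95% figure describes the long-run share of intervals that do, which is the plural "intervals" point made above.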

I always love the expression “good enough for government work”. But at least the government has certain standards. I’ve read enough of the garbage from Kopits to realize whatever standards he was supposed to have learned at Columbia went out the window years ago.

That’s fine, Slugs.

But there’s a difference between mechanically applying a test and thinking about what you are doing and the real world implications.

For a report which will draw national attention, you have to think about the wider public and political context. So we’re looking for a narrative, not just a brain dead statistical analysis. If you’re thinking about 4645 in December and the most recent ‘actual’ is 1250 in October, then you have some decisions to make. Is the October number good? How far off could it be? If it’s good, is an additional 3400 deaths in Nov / Dec plausible? Are death rates increasing or decreasing? Are they going vertical? If the October numbers are wrong, what could make them wrong? How could we check? What indirect evidence might we expect to see? Does the government have an incentive to lie to us? Would they do that? Why? Who would know if they’re lying? Can we talk to them? How do numbers roll up? Can we make a judgment about lags from revisions?

There’s a series of questions you ask here, right?

Now, I don’t expect junior people to know all this stuff. It’s quite routine to have young analysts bring in work which, of itself is ok, but way off mark in the larger picture. And there’s absolutely nothing wrong with trying something new and maybe a bit flaky. That’s all ok.

But for any project like this, there is supposed to be a senior manager who can look at a column of numbers or a graph and say, “Doesn’t look right to me.” There was a complete failure on this front with the Harvard study.

“So we’re looking for a narrative, not just a brain dead statistical analysis. ”

if you are publishing in peer review journals, a “narrative” would be excluded immediately.

CoRev You asked: What can the Harvard estimate be used for in the REAL WORLD?

Lots of things, but one of the most obvious is that it can be used to inform future policymakers of a plausible range of casualties when planning for future hurricanes or natural disasters. It’s common to assign a statistical value of a human life. If you know that 8000 deaths is plausible within a 95% confidence interval, then that would justify prepositioning more FEMA and DoD assets than could be justified if you believed a number like 64. Put another way, it could be used to justify a budget submission. It’s not all that different from the kind of thing DoD does when building war reserve stocks. Some assets are positioned in theater. Some are “afloat” on prepositioning ships, while other war reserve stocks are held in CONUS depots. No one pretends to know exactly how many spares and repair parts will be needed for the next conflict, but that doesn’t prevent us from running lots of simulations that ultimately feed a confidence interval. It’s up to policymakers to decide how much risk they want to take, but at least policymakers are provided with those risk estimates. And the cluster analysis approach used in the Harvard study could be used to preposition assets where they’re most likely to be needed.

2Slugs

Long ago and in a different costume, I was a staffer in a military command, I monitored reports of fill rates from our units on their deployment “kits”.

During the Reagan build up one of the things that happened was they freed those kits from limits due to funding…. war reserve spares are funded separate from operating assets.

A new more munificent supply “list” came out and all my units of a certain type weapon system were no longer rated as “combat ready”!

The acetate slide went up, the general looked over at the company grader in the second row, I suggested that last week we were combat ready now “with more money we are not combat ready”…….

I did not get fired! I could not see my boss’ face, but he did not chew me out! I knew way too much about what those “kit lists” meant!

Aside those sparing models are optimizations and the choices of object function and constraints were hotly debated.

Confidence in the underlying models result from accrediting the models something stars has for about 1/3 of the models they sell their stock with.

ilsm I also served a brief time as a “346” analyst many years ago. I don’t want to get too far off the blog post topic, but I’ll make a few comments.

Yes, war reserves are funded (or not!!!) separately from peacetime assets. Peacetime optimization models use relatively simple steady-state approaches and tend to focus on fill rates assuming either a compound (or “stuttering”) Poisson or negative binomial waiting time distribution; but wartime models require much more complex dynamic modeling. And almost no one in senior leadership understands them because the math is just way too complex, so the briefings get dumbed down to the point of being useless.

The optimization target itself is not all that controversial within the technical community, but it tends to get very confused within leadership. Fill rate targets and mission capable rate targets and operational availability targets all get blurred into the same thing in the minds of general officers. Most readiness-based sparing (RBS) models focus on operational availability, which is calendar based. What gets reported on status charts is either the downpart fill rate (an input to the RBS model), or the mission capable rate, which is a unit level metric based on the percent of the fleet.

2Slugs,

Yes, there are a lot of things ‘346’ do over 40 odd years.

In the end the ‘decision maker’ needs options, I observed a lot about styles. Which is why the boss needs to decide if he/she is a min-max’er or a max-max’er. Regret functions etc.

2slugs, finally! And believe it or not we agree with a huge but. But, as I said in an earlier comment, how do we assure legislators don’t under or over shoot? What you have set is a range (CI if you insist) of 64 to 8,000, and to use that large number you also believe it to be plausible. Being within a large CI does not necessarily add plausibility. The core story in this series of articles has to do with the plausibility (seeming reasonable or probable) of the Harvard Study’s estimates regardless of its huge CI. The plausibility of the PR’s estimate of 64 deaths was already known. When the Harvard study authors say they wanted an independent estimate from the PR numbers, they tried a survey approach which has a long history of implausible results, voter surveys at elections are the most obvious.

In one example you have discredited the Harvard study, FEMA administrators and legislators in developing budgets by assuming the issues were not known or at best well understood. You and I both know that to be untrue and shows the Harvard authors did not know that, or had alternative agendas.

You, and the other Harvard Study believers trying to blame Trump have treated Hurricane Maria as a singular event, with your claims that “prepositioning more FEMA and DoD assets” could have been better. That is always true in after action reporting, but those estimates have also to accept the uniqueness of the Maria situation. It was the 3rd hurricane to pass through the Caribbean in a short period, using much of those prepositioned resources, was a direct hit impacting the entirety of PR, PR’s infrastructure was already near disaster without the impact of a major storm, many FEMA and DoD resources were already engaged in recovery operations in the previous two hurricanes, and finally the infrastructure of PR was essentially destroyed, a massive disaster. It was not a singular event and was well outside the high norm for even the experienced planners at FEMA and DoD.

Reliance on a large CI for estimates does not add much value, as you have just indicated. Insisting that it does alerts many to ulterior motives of those insisting, and having a history of Trump bashing identifies the probable motive. Starting a series of articles by bashing Trump confirms the original motive. When that article starts with the reference to the Harvard Study: “

Heckuva Job, Donny! Puerto Rico Edition

35 Replies

From New England Journal of Medicine, results of a study led by researchers at Harvard T.H. Chan School of Public Health and Beth Israel Deaconess Medical Center:” and the series of articles are attempts to justify that study after being seriously questioned, show the intransigence of the author to bash Trump.

After all these articles and comments the best use of the Harvard estimates is a potential “to inform future policymakers of a plausible range of casualties” where plausibility is the core question of the study.

CoRev I believe the whole point of a confidence interval is to give policymakers a feel for what is plausible. The fact that DoD and FEMA consistently underestimated the potential for disaster illustrates exactly why we should pay attention to the Harvard study. If your understanding of confidence intervals is such that you believe the midpoint is more likely than the edge of the confidence interval, then you’re misunderstanding what a confidence interval means. The true parameter lies somewhere between those intervals…you just don’t know where. We’re not talking about central tendencies here.

2slugs, exactly my point: “The true parameter lies somewhere between those intervals…you just don’t know where. We’re not talking about central tendencies here.” It is why I have used range, a more often used term, than CI. Relying on a range large enough to steam a hospital ship through does not add confidence to the estimate, 4,645 reported in the Press Release and the Study. Plausibility (seeming reasonable or probable) loses confidence for actual use, especially so for funding legislation.

It has been you stats folks who assumed we didn’t know what a CI was. That never was true, but what we did realize was of how little use were the Harvard Study’s numbers, low, mid-point, and high, for any real world use. (How many more times does this need to be repeated?) It was that realization that caused the characterizations at which Menzie and his followers took so much umbrage.

Not once in this series of articles and comments have you folks admitted that, only assuming we didn’t understand, or that peer review protected us in some way from these useless Studies being printed. It doesn’t!. Never has! And is worse in some sciences, especially medical, where a huge number of such studies are too often identified.

Y’all have been tilting at windmills for no purpose.

CoRev: “…useless Studies…” Has randomized capitalization propagated from Mr. Trump to you? Or has “study” become a proper noun when I wasn’t watching. And what’s with the period after the exclamation point?

“It is why I have used range, a more often used term, than CI.” No, I’d say “range” allows you to be lazily ambiguous about the extent and nature of your uncertainty. Please, please, please, I beg of you, read a basic stats textbook.

Menzie, this is from the Harvard Study:

“RESULTS

From the survey data, we estimated a mortality rate of 14.3 deaths (95% confidence interval [CI], 9.8 to 18.9) per 1000 persons from September 20 through December 31, 2017. This rate yielded a total of 4645 excess deaths during this period (95% CI, 793 to 8498), equivalent to a 62% increase in the mortality rate as compared with the same period in 2016. However, this number is likely to be an underestimate because of survivor bias. The mortality rate remained high through the end of December 2017, and one third of the deaths were attributed to delayed or interrupted health care. Hurricane-related migration was substantial.”

What is the study’s estimate of excess deaths?

Menzie, my understanding of CIs is contained in this definition:

“Interval estimation (statistics), written by the Editors of Encyclopaedia Britannica

Interval estimation, in statistics, the evaluation of a parameter—for example, the mean (average)—of a population by computing an interval, or range of values, within which the parameter is most likely to be located. Intervals are commonly chosen such that the parameter falls within with a 95 or 99 percent probability, called the confidence coefficient. Hence, the intervals are called confidence intervals; the end points of such an interval are called upper and lower confidence limits.

The interval containing a population parameter is established by calculating that statistic from values measured on a random sample taken from the population and by applying the knowledge (derived from probability theory) of the fidelity with which the properties of a sample represent those of the entire population.

The probability tells what percentage of the time the assignment of the interval will be correct but not what the chances are that it is true for any given sample. Of the intervals computed from many samples, a certain percentage will contain the true value of the parameter being sought.”

The Harvard Study cited the interval, or range of values to be 793 to 8498 with a mean of 4645, which they used in a plain English sentence: “This rate yielded a total of 4645 excess deaths during this period (95% CI, 793 to 8498), equivalent to a 62% increase in the mortality rate as compared with the same period in 2016.”

My points are two.

1) Which of these numbers best represents the Harvard Study’s estimate of excess deaths?

2) If the official estimates are considered bad in the time frame studied, why are the estimates for the same period in 2016 considered better or good?

You’ve written six articles now. The first was based upon this study, and several others ridiculed or berated those who disagreed with the study results or overall process, while defending the study. Yet, these points are still not provided, except from those who disagreed with the study or your interpretation of its value.

As for your questions regarding my typing errors, the answer is old eyes and small print and probably copying a word. As for my uncertainty, extent – I have not done an estimate, only cited others, so theirs is mine, and I think the nature of my uncertainty I have made clear. Reliance on a study where the CI is so large has little utility for Real World use. I remain unconvinced.

i have a simple question for steven, corev, bruce, and others. have you actually read the harvard study in the nejm?

let me belabor a question posed to steven again. many of you in the conservative echo chamber have no problem using biased “reports” with an agenda, which would not make it through typical peer review processes due to lack of transparency and factless propaganda. steven went so far as to say the authors of this report should have considered the audience response when producing its conclusions. really, you want that in a peer review process?

looking over the paper (it is not a bought and paid for report from a think tank), it appears it has passed the criteria needed from a peer review process. it attempts to explain the data collection and statistical details used, at least within the limitations of a page limit. i did not see anything nefarious in this paper which should attract any of the ridicule coming from the conservatives. the only fair argument, which none of you have really used, is that the sample size was not large enough. this produced a confidence interval which is larger than desirable. on the other hand, that is simply an outgrowth of the experiment. the data collection process resulted in those numbers. you don’t like them, fine. do you want them to change the numbers? the only way to do so is to gather more survey data. you don’t get to consult with a “senior manager” about the results, and how to skew them one way or another-steven this is directed at you. at the same time, perhaps you could also complain that the puerto rico authorities have not done enough to gather and examine bodies-which is necessary for them to increase the death count as well. this complaining about the harvard study, calling it “garbage”, appears to be without merit. unless you have a political agenda to achieve.

Baffled, yes. In fact I usually had the Press Release and the Study open while responding to the past couple of Menzie’s articles.

We disagree. Maybe you can find a better use than 2slugs for the metrics it provided. I’ll concede its use as an academic exercise.

Baffs –

The errors in the report come from a number of different directions, but not, I believe, in the mechanics of report construct.

Here’s what I think — but don’t know — went wrong.

1. Mortality incidence was low, less than 1%. That made the survey vulnerable to response distortion. We know that money is at stake. If someone died from the hurricane, then the govt pays funeral expenses. Now, it’s not impossible that 13 of the 4,000 respondents thought that those reporting a death would receive a payment from the govt. I know, it sounds crazy, but in my Hungarian experience, in any hundred people, 3 were total wackos. Could you get a dozen wackos in 4,000 surveys? You could.

2. Stratified sampling. The authors tried to mimic the national distribution in the survey. If there happened to be a particular area with higher than average mortality and they sampled that heavily, it could distort the numbers.

Whatever it was, the approach didn’t work. The CI is too large to be really useful, and the central estimate is way off, much worse than, say, simple visual estimation based on the last few ‘final’ months of data. (Assuming no missing bodies or govt lies.)

The other mistakes were not of the analysis per se, but of project management. The first was the failure to reconcile the statistical results to known actuals. Had the authors done that, of course it would have gutted their findings, but they would not have ended up in this controversy. Similarly, had the authors contacted the PR authorities up front, they probably would have gotten the updated death numbers before publication. Those were two major mistakes, but neither related to statistics per se. They were related to common sense project management. It was the project manager’s fault, in other words.

“The first was the failure to reconcile the statistical results to known actuals.”

steven, you and i appear to have two very different levels of confidence in those “known actuals”.

the study chose to ignore people designated as missing. i.e. the assumption is that none of the missing were dead. rather conservative approach, which you should applaud. they also did not count single person households. both of these items would increase the death toll if counted. this makes the “known actuals”, which are not “known” at all, all the more suspicious.

“They were related to common sense project management. ”

this is a scientific study. not a report due to a client. you may have a habit of distorting your work to the satisfaction of the client, but that would be highly unethical in a scientific, peer reviewed and published study. you publish the results you obtain, you do not finesse the results to your liking. steven, you have a failure to understand where this study was published and what that represents.

Baffled, you’re fixated on the number 64, official death count. As I’ve pointed out to you several times now, that number was known to be low by many PR Officials. We didn’t need a study hypothesized confirming an already known and accepted issue. Death counts are almost always estimates with error bounds, just as it is TODAY with Katrina.

So the obvious value of an independent study is not to confirm a HIGHLY SUSPECTED – KNOWN ISSUE, but to estimate how much, because in knowing that with some precision there is value. This study failed that need.

With these two problems added to Steven’s list of issues, the value of this study is greatly diminished to almost nil, with the exception of it using another seldom used approach, an academic value.

Baffled, you just admitted that you never read the lead reference in the article which started all this furor. Why do you think the response to the Study was so vehement? Did you actually believe we had not read it?

i read it, corev. your complaints about the study are inaccurate. an outcome of the study indicates the need for the government to get boots on the ground quickly after a significant natural disaster in order to get a handle on the magnitude of the crisis. the more boots, the better the understanding of the magnitude of the crisis. relying on passive accumulation and assessment of deaths from the coroners office presents a false understanding of the magnitude of the crisis at hand. this is a very clear outcome and REAL WORLD use of this study. now are you going to stand in the way of government funding to conduct such a survey, so that we do not underestimate the magnitude of future events and appropriate responses. or are you happy to let the government determine a response based upon 64 deaths without spending any additional funds?

Baffled, reading comprehension, please. 64 deaths has been updated, why do you insist on using this old number? Before Harvard even started its data collection other studies estimated deaths many times higher than the then official number. PR officials were admitting that their official numbers were incorrect. The issue was how incorrect were the official numbers. Can you tell me what the excess deaths estimate is from the Harvard Study?

Another Duh comment: “an outcome of the study indicates the need for the government to get boots on the ground quickly after a significant natural disaster in order to get a handle on the magnitude of the crisis.” You seem to think this is a new or unknown issue. It is true for every emergency and more so in disasters. But early boots on the ground is usually performed by FIRST RESPONDERS, which are overwhelmingly the responsibility of local government.

The remainder of your comment is just more nonsense.

CoRev: To be precise, the 64 count has not been updated. That number pertains to deaths attributable (direct, in other words) to the hurricane. The coroner has to make the determination that the death is say due to flying debris. The additional official information regards overall deaths, from which one can infer excess deaths relative to usual.

Menzie, I’m not sure that the 64 deaths are direct deaths. i saw one article that the last two numbers added were in fact indirect deaths.

“”These deaths that are certified today as indirect deaths related to Hurricane Maria are the result of investigations into cases that have been brought to our consideration,” DPS secretary Hector M. Pesquera said in a statement. ”

It also includes this: “The official death toll remains heavily scrutinized by critics, though, who believe the figure is significantly higher. “, confirming my statement that the excess/overall deaths were thought to be low. And, known before the start of the Harvard Study

https://abcnews.go.com/Lifestyle/wireStory/yellowstone-head-trump-administration-forcing-55722252

Dec 10, 2017, 2:32 AM ET

The DPS release was Saturday, 6 December 2017.

To the best of my knowledge, the 64 number is the correct number associated with direct hurricane fatalities. I don’t anticipate that will change.

The excess deaths come from indirect fatalities principally associated not with the hurricane, but with a loss of power. The loss of power is attributed to the hurricane, or maybe PREPA bankruptcy, or maybe a lack of mainland support.

The Milken Institute, as I understand it, was specifically retained to work through the death certificates and come up with a new protocol assigning deaths to the hurricane. Bear in mind that approximately 40% of the deaths in the Harvard survey were attributable to the hurricane. Thus, if the official death numbers hold up, 3,600 of 9,500 total deaths from Sept 20 – Dec 31 would be attributable to the hurricane on excess deaths of only 1400. Which is a problem.

For this reason, I might be inclined to go with a years-of-life approach and try to calculate the actuarial years of life lost to the hurricane and subsequent events. This should be feasible, or at least feasible-ish. Thus, if a woman of 80 years of age had a heart condition, we could calculate the anticipated years of life remaining, and deduct the actual from that. Thus, if she would have lived 12 months actuarially, but died three months after the hurricane, then we could calculate the years-of-life lost as 0.75. You can then take the full benefit to be paid at some agreed number, say, 20 years of life, and pay, say, 3.75% of the total death benefit due in this case. If the total benefit is, say, $3,000, then the payment in this case comes out at $112. This would finesse the attribution issue, as it allocates partial blame in some sense without making any definitive claims about attribution. It spreads the money shallow but wide, instead of deep and narrow.
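The arithmetic in the years-of-life proposal can be written out as a short sketch. The function name `prorated_benefit` and its parameters are hypothetical, introduced only to restate the comment’s own numbers (12 months expected, death at 3 months, a 20-year full-benefit basis, a $3,000 full benefit); nothing here is an actual benefit schedule.

```python
def prorated_benefit(expected_months, actual_months, full_benefit, full_years=20):
    """Return (years of life lost, share of full benefit, payment).

    expected_months: actuarial months of life remaining before the event
    actual_months:   months actually lived after the event
    full_years:      years of life lost that would earn the full benefit
    """
    years_lost = max(expected_months - actual_months, 0) / 12
    share = years_lost / full_years
    return years_lost, share, full_benefit * share


if __name__ == "__main__":
    years_lost, share, payment = prorated_benefit(12, 3, 3000)
    print(f"years lost: {years_lost:.2f}, share: {share:.2%}, payment: ${payment:.2f}")
```

With the comment’s inputs the share works out to 3.75% and the payment to about $112.50, which the comment rounds to $112.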

I don’t insist on using 64, the government does. Why doesn’t trump insist on revising it to a more accurate number? For instance, the study had a lower bound of about 800 deaths. Probably a more realistic number than 64. It is included in the study, but you seem to want to overlook or complain about the usefulness of the study rather than use its RESULTS in the REAL WORLD. In a previous post, I asked you to compare Maria and Katrina deaths. You pulled all kinds of gymnastics to avoid answering that question. In the context of the numbers just posed, it is a very relevant question. Corev, Steven, ed and others have this fascination with bashing the academic ivory tower. I presented you with an opportunity to compare this event with another significant event as a sanity check. You all failed this real world check because of a preconceived (and incorrect) notion that the Harvard study was garbage. You all simply act like the party of no.

Baffled, you complain I chose not to answer an unanswerable question, while you did the same thing. You chose NOT TO USE numbers of deaths even for Katrina, let alone those in flux for Maria and just Puerto Rico. I hope you realize Maria passed through much of the Caribbean as well as part of the US.

Where is your Katrina number?

corev, you simply cannot accept when you are wrong. you continue to dig deeper. as a refresher for your simple mind, i will restate the simple question here. i answered it in the previous post, y’ano.

“corev, do you think maria or katrina produced more deaths?”

simple question with a simple response requested. and all you do is come up with silly excuses to avoid providing an answer. what a loser.

Baffled, you’ve been baiting me with this question for days, and gotten the same answer. I don’t know. All of this was done in comments from several articles about the lack of precision in the Maria death estimates. During these discussions of Maria we saw how imprecise were the Katrina death estimates to this day. And you persist? What a horses [sic] pet****. [edited by MDC]

https://www.facebook.com/TheHorseMafia/videos/1595018727197525/UzpfSTEwMDAwMDI3Mzk0MjIyMzoxOTU1MDAxNzI3ODUyMjcz/

simple question corev. what a loser, y’ano.

CoRev It is why I have used range, a more often used term, than CI. Relying on a range large enough to steam a hospital ship through does not add confidence to the estimate, 4,645 reported in the Press Release and the Study. Plausibility (seeming reasonable or probable) loses confidence for actual use, especially so for funding legislation.

A range is not the same as a CI. A range refers to the difference between the highest valued observation and the lowest valued observation. But I suspect you actually have in mind a gut feeling as to what is plausible. That’s not what I’m talking about. A CI provides policymakers with a statement of risk. In the case of the Harvard study it says that there is a 5% chance that the true number of deaths is outside the CI interval. The Harvard team could have provided a 99.9% CI, but assuming policymakers are that risk averse would be implausible. I’m talking about the plausibility of risk tolerance, not the plausibility of the number of deaths.
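The distinction between a sample range and a confidence interval can be made concrete with a small sketch. The data below are hypothetical and the helper names `sample_range` and `mean_ci` are made up for illustration; the interval uses a normal approximation (z = 1.96), which is crude for a sample this small.

```python
import statistics


def sample_range(values):
    """Range in the textbook sense: largest observation minus smallest."""
    return max(values) - min(values)


def mean_ci(values, z=1.96):
    """Approximate 95% confidence interval for the population mean."""
    n = len(values)
    mean = statistics.fmean(values)
    half_width = z * statistics.stdev(values) / n ** 0.5
    return mean - half_width, mean + half_width


if __name__ == "__main__":
    data = [2, 4, 4, 5, 6, 7, 7, 8, 9, 18]  # hypothetical observations
    low, high = mean_ci(data)
    print(f"range of observations: {sample_range(data)}")
    print(f"95% CI for the mean: ({low:.2f}, {high:.2f})")
```

The range describes the spread of the observations themselves, while the interval describes uncertainty about the estimated mean and narrows as the sample grows.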

All that said, I do have a couple of criticisms with the Harvard study. For one thing, it would have been helpful to policymakers if they had provided alternative CI’s; e.g., an 80% CI and a 90% CI. A 95% CI is common in academic studies, but there’s nothing sacred about it. I also have some qualms about their assuming a Poisson distribution. The Poisson assumes a constant and time invariant arrival rate (or “lambda” value). Looking at their charts it looks like the “lambda” value was dropping with time. The Poisson also assumes that only one event can occur at the same time. That might not be true. Another problem is that their methodology is vulnerable to a “zero inflate” problem; i.e., an excess number of zero fatalities in the household.

https://en.wikipedia.org/wiki/Zero-inflated_model
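Two of the Poisson concerns raised above can be screened with simple descriptive checks. This is only a sketch on made-up data: the household death counts are hypothetical, `poisson_checks` is an illustrative name, and none of it reproduces the Harvard team’s method. A plain Poisson has variance equal to its mean and a zero share of exp(-mean), so a dispersion ratio well above 1 or a surplus of zeros hints at a poor fit.

```python
import math
import statistics


def poisson_checks(counts):
    """Compare observed counts with what a plain Poisson would imply."""
    mean = statistics.fmean(counts)
    variance = statistics.pvariance(counts)
    return {
        "mean": mean,
        # Poisson implies variance == mean, i.e. a ratio of about 1.
        "dispersion": variance / mean,
        "observed_zero": counts.count(0) / len(counts),
        # Poisson probability of a zero count is exp(-mean).
        "expected_zero": math.exp(-mean),
    }


if __name__ == "__main__":
    # Hypothetical deaths per household: mostly zeros, a few multiples.
    counts = [0] * 90 + [1] * 4 + [2] * 3 + [3] * 3
    for name, value in poisson_checks(counts).items():
        print(f"{name}: {value:.3f}")
```

On data like these, the observed share of zeros exceeds the Poisson expectation and the dispersion ratio is above 1, which is the pattern a zero-inflated model is meant to handle.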

I’ve got some other issues as well, but this thread is already way too long. And I don’t want to be too hard on the researchers because some of my concerns border on nitpicking.

2slugs, the core issue is plausibility of the number of deaths, official and otherwise. Join us. 😉

CoRev For purposes of this sub-sub-thread the issue I addressed was an answer to your question regarding the usefulness of the study for future planners. As to the plausibility of their estimate of the actual number of deaths in Hurricane Maria, here the issue is that age old battle of head versus gut. Your gut might tell you that 4600 is too high, but given their assumptions and methodology the math says it’s not.

Forget about the central number. The lower bound of 800 deaths is still an order of magnitude greater than the official toll of 64. And 800 does not seem unreasonable. The study allows one to say, at a minimum, we had 800 deaths. That does have real world value. And brings further criticism to the government numbers. Steven, as for your garbage comments, is 800 deaths a garbage estimate?

Baffled, 2slugs, pgl, Joseph, Menzie etc all of you defending the Harvard Study, Glenn Kessler (WaPo’s Fact Checker) has a new article on it, and repeats most of the arguments we conservatives have used here against the value of the study. It is not on the web yet, only in print. When it is available I will link to it.

I doubt it will change any of your minds, but it will show that it is being panned in professional circles.

Baffled, only you have focused on the 64 deaths number. It has been known to be in error since long before the Harvard Study, and Puerto Rico commissioned GWU to develop another study for more definitive numbers. Maybe once that study is released we will be able to judge which hurricane was more deadly.

steven and corev are permitted to use 64, the official death toll, as a baseline to criticize the harvard study. but apparently i am not allowed to use the same number. interesting corev. should i simply make up an official death toll and use that? you seem to do that with numbers in the past on many other topics, perhaps that is a better approach. easier to make your argument that way, y’ano?

the harvard study shows with 95% confidence limit that there are at least 800 deaths attributed to maria. there is real value in that number. once again, your criticisms of the study are more due to your bias against academics than anything else. that applies to both corev and steven. explain to me how knowing at least 800 deaths attributable to maria is garbage and not of any REAL WORLD value. how is that worse than accepting 64, which you acknowledge as incorrect and an undercount? your arguments against this study are simply garbage. you two are accepting a knowingly incorrect number, off by probably an order of magnitude, and you have the audacity to sit in your air conditioned chairs and grin as you complain somebody else’s report is not comprehensive enough. sad.

Slugs –

A Poisson distribution for deaths by date is probably correct. In fact, the data follows such a pattern, with an early acceleration and an inflection point already in October and a leveling by November, with some incremental creep into 2018. So that assumption looks fine, but you have to be careful about your views on how that curve is developing. If you start from the 4645 central estimate, then the shape of that curve depends strongly on the ‘actual’ anchors used, if any. For example, if you thought the October actuals were solid but still held with 4645 for the year end estimate, then the inflection point falls into November and the function peaks substantially above 4645 in, say, February or March.

Also, the distribution of deaths by date should not be confused with the estimates of total excess deaths as of Dec. 31. This latter estimate would likely have a normal distribution around a mean. Given that the central estimate is the average of the two CI limits at 95% confidence, the implication is that they used a z score with a normal distribution. That would be the plain vanilla approach, unless otherwise noted.
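The symmetry argument above can be checked with a few lines of arithmetic. This is a sketch under the commenter's own assumption that the interval is a plain normal (z-score) interval. It uses only the published point estimate and 95% bounds, and shows what 80% and 90% intervals would look like under that assumption. The function names are illustrative, not from the study.

```python
from statistics import NormalDist

# Published figures quoted in the post.
POINT = 4645
LOWER, UPPER = 798, 8498

def z_for(level):
    """Two-sided critical value of the standard normal."""
    return NormalDist().inv_cdf(0.5 + level / 2)

def implied_se(lower, upper, level=0.95):
    """Standard error implied by a symmetric normal interval."""
    return (upper - lower) / (2 * z_for(level))

def normal_ci(point, se, level):
    """Symmetric normal interval around the point estimate."""
    half = z_for(level) * se
    return point - half, point + half

midpoint = (LOWER + UPPER) / 2   # compare with the 4645 point estimate
se = implied_se(LOWER, UPPER)

print(f"midpoint of bounds: {midpoint:.0f}, implied SE: {se:.0f}")
for level in (0.80, 0.90, 0.95):
    lo, hi = normal_ci(POINT, se, level)
    print(f"{level:.0%} CI under normality: ({lo:.0f}, {hi:.0f})")
```

The midpoint of the bounds lands within a few deaths of the point estimate, which is consistent with a symmetric interval, though it does not by itself prove how the authors computed it.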

I don’t think that’s how or why they used a Poisson. The Poisson assumes a constant lambda value with independent arrivals. I think they used it out of convenience more than anything else. A Poisson has a lot of nice properties that make it easy to work with. For example, notice in the supplementary tables where they take the square root of the death rate per 1000; i.e., the square root of lambda. That’s because the variance of a Poisson equals its mean, so the standard deviation equals the square root of the mean. And since the discrete Poisson is well approximated by the normal as the mean gets large, this made it easy to come up with a quick and dirty estimate of the standard error.
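The square-root shortcut described above can be sketched as follows. The death count and sample size are hypothetical placeholders, not the study's figures, and the function is an illustration of the Poisson-plus-normal approximation rather than the authors' actual code.

```python
import math

def poisson_rate_ci(deaths, persons, z=1.96, per=1000):
    """Rate per `per` persons with a normal-approximation interval.

    Treats the death count as Poisson, so its variance equals its mean
    and the standard error of the count is sqrt(deaths).
    """
    scale = per / persons
    rate = deaths * scale
    se = math.sqrt(deaths) * scale
    return rate, se, (rate - z * se, rate + z * se)

# Hypothetical survey: 40 deaths among 3000 people.
rate, se, (lo, hi) = poisson_rate_ci(deaths=40, persons=3000)
print(f"rate per 1000: {rate:.2f}, SE: {se:.2f}, 95% CI: ({lo:.2f}, {hi:.2f})")
```

The approximation leans on both the constant-lambda assumption and a count large enough for the normal curve to be a fair stand-in for the Poisson.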