Today we are pleased to present a guest contribution written by Dimitrios Kanelis (Westfälische Wilhelms-Universität Münster) and Pierre Siklos (Wilfrid Laurier University and CAMA at ANU). The views expressed here are their own and do not reflect the official opinions of the institutions the authors are affiliated with.

An obvious source of unscripted information is a person’s voice, since the voice does not necessarily deliver the same message as the words. Gorodnichenko et al. (2020) provide evidence that the vocal emotions of the Federal Reserve Board chair have significant effects on stock prices in the days following FOMC press conferences. Complementary studies by Curti and Kazinnik (2021) and Alexopoulos et al. (2022) estimate the real-time effects of the Fed chair’s facial emotions on stock prices.

In our paper, we estimate the effect of vocal emotions and language during the Q&A sessions of ECB press conferences under Mario Draghi’s presidency on the yield curves of the four largest euro area economies, as well as on their spreads vis-à-vis German yields. We conduct an event study and construct a novel data set of time-synchronized audio and textual data for press conferences held between May 2012 and October 2019.

One challenge is that Draghi answers several questions in a row on entirely different topics. We therefore exploit an interesting characteristic of the ECB press conference transcripts: ECB staff identify focal points and structure the written transcript around them. Following this structure, we align the audio data with each answer and establish synchronicity between voice and words. We then implement the Fully Convolutional Neural Network (FCN) of García-Ordás et al. (2021), which has the special property that it can process audio files of non-fixed length.
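Why a fully convolutional design suits answers of different lengths can be shown with a small sketch. The NumPy code below is not the García-Ordás et al. (2021) architecture or the authors’ pipeline; it is a toy, untrained network resting on stated assumptions: a 16 kHz sampling rate, hypothetical (start, end) timestamps for each answer, log-magnitude spectrogram features, and random weights. What it does demonstrate is the property that matters: the convolutions slide along the time axis and global average pooling then collapses that axis, so every answer, however long, maps to the same number of class probabilities (12 here, for six emotions at two intensities).

```python
import numpy as np

SR = 16_000             # assumed sampling rate in Hz
FRAME, HOP = 400, 160   # assumed 25 ms frames with a 10 ms hop
N_CLASSES = 12          # 6 emotions x 2 intensities


def segment_answers(audio, spans, sr=SR):
    """Cut one recording into per-answer clips from (start_sec, end_sec) pairs."""
    return [audio[int(start * sr):int(end * sr)] for start, end in spans]


def log_spectrogram(clip):
    """Windowed frames -> log-magnitude spectra, shape (n_frames, FRAME // 2 + 1)."""
    n_frames = 1 + (len(clip) - FRAME) // HOP
    idx = np.arange(FRAME)[None, :] + HOP * np.arange(n_frames)[:, None]
    frames = clip[idx] * np.hanning(FRAME)
    return np.log1p(np.abs(np.fft.rfft(frames, axis=1)))


def conv1d(x, w):
    """'Valid' 1-D convolution over time: x is (T, C_in), w is (K, C_in, C_out)."""
    k = w.shape[0]
    windows = np.stack([x[i:i + k] for i in range(x.shape[0] - k + 1)])
    return np.einsum("tkc,kco->to", windows, w)


def fcn_forward(spec, params):
    """Conv -> ReLU -> conv to class maps -> global average pooling -> softmax."""
    h = np.maximum(conv1d(spec, params["w1"]), 0.0)
    class_maps = conv1d(h, params["w2"])   # (T', N_CLASSES); T' depends on clip length
    logits = class_maps.mean(axis=0)       # global average pooling removes the time axis
    z = np.exp(logits - logits.max())      # numerically stable softmax
    return z / z.sum()


# Toy usage: random (untrained) weights and one minute of synthetic noise.
rng = np.random.default_rng(0)
params = {
    "w1": rng.normal(0.0, 0.05, (3, FRAME // 2 + 1, 16)),
    "w2": rng.normal(0.0, 0.1, (3, 16, N_CLASSES)),
}
audio = rng.normal(0.0, 0.1, SR * 60)
# Hypothetical answer timestamps in seconds.
clips = segment_answers(audio, [(0.0, 4.5), (5.0, 21.0), (22.0, 58.0)])
probs = [fcn_forward(log_spectrogram(c), params) for c in clips]
for clip, p in zip(clips, probs):
    print(f"{len(clip) / SR:5.1f} s -> {p.shape[0]} class probabilities")
```

A network ending in a dense layer over the raw time axis would instead require every clip to be padded or truncated to a single length, which is awkward for Q&A answers ranging from a few seconds to a minute.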

To measure the unscripted language content of the press conferences, we implement FinBERT. This large language model can analyze economic and finance-related text and goes beyond the word-counting approach of dictionary methods. Our bond market data cover France, Germany, Italy, and Spain.

To quantify the emotions and generate a numerical variable for our event regressions, we use methods developed in speech emotion recognition (SER), a subfield of machine learning (Pérez-Espinosa et al., 2022). Economists have recently started to use SER to analyze the vocal sentiment of the Fed chair and estimate its effects on asset prices (Gorodnichenko et al., 2020; Alexopoulos et al., 2022).

In contrast to Gorodnichenko et al. (2020), we use a Fully Convolutional Neural Network (FCN) based on García-Ordás et al. (2021), which achieves higher out-of-sample accuracy and has clear advantages for measuring emotions during Q&A sessions, where answers vary greatly in length. The network distinguishes six emotions (Neutral, Calm, Happy, Sad, Angry, and Surprised), and these are available in two intensities (normal emotional intensity and strong emotional intensity).
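One way to turn a classifier’s twelve output probabilities into a single numerical variable for a regression is a net score: probability mass on positive emotions minus mass on negative ones. The sketch below rests on three illustrative assumptions that are not the paper’s definitions: a hypothetical grouping (Happy as positive; Sad and Angry as negative; Neutral, Calm, and Surprised as neither), pooling of the two intensities, and a length-weighted average of answers within a press conference.

```python
import numpy as np

EMOTIONS = ["Neutral", "Calm", "Happy", "Sad", "Angry", "Surprised"]
INTENSITIES = ["normal", "strong"]
# Assumed class order: for each emotion, normal intensity then strong.
LABELS = [(e, i) for e in EMOTIONS for i in INTENSITIES]

# Hypothetical grouping for illustration; not the paper's definition.
POSITIVE = {"Happy"}
NEGATIVE = {"Sad", "Angry"}


def net_vocal_score(probs):
    """Positive minus negative probability mass (intensities pooled), in [-1, 1]."""
    pos = sum(p for (emotion, _), p in zip(LABELS, probs) if emotion in POSITIVE)
    neg = sum(p for (emotion, _), p in zip(LABELS, probs) if emotion in NEGATIVE)
    return float(pos - neg)


def conference_score(answer_probs, answer_seconds):
    """Average of per-answer scores, weighted by answer length (an assumption)."""
    scores = [net_vocal_score(p) for p in answer_probs]
    return float(np.average(scores, weights=answer_seconds))


# Two made-up answers: a 10 s mostly happy one and a 30 s partly sad one.
happy = np.zeros(len(LABELS))
happy[LABELS.index(("Happy", "strong"))] = 0.6
happy[LABELS.index(("Neutral", "normal"))] = 0.4
sad = np.zeros(len(LABELS))
sad[LABELS.index(("Sad", "normal"))] = 0.5
sad[LABELS.index(("Calm", "normal"))] = 0.5
print(net_vocal_score(happy), net_vocal_score(sad), conference_score([happy, sad], [10, 30]))
```

Weighting by length lets a long answer count for more than a one-line reply; an unweighted mean is an equally defensible alternative.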

During the European Sovereign Debt Crisis (ESDC), vocal sentiment is persistently negative. An exception is the press conference of 2 August 2012, the most positive moment during the crisis, held a few days after Draghi’s famous “Whatever it Takes” speech, which is considered a turning point of the ESDC. After the ESDC ends, vocal sentiment rises temporarily before turning more negative again during most of 2014, a challenging year for the ECB Governing Council owing to low inflation and weak economic growth, increasing financial fragility, and the risk of inflation expectations becoming un-anchored. The introduction of the Asset Purchase Programme (APP) coincides with more positive vocal sentiment, possibly reflecting Draghi’s success in pushing through unconventional monetary policies despite the controversy surrounding them inside the Governing Council (Brunnermeier et al., 2016). Average vocal sentiment declines again during 2018, a time of mounting policy challenges, and reaches a new low when the ECB restarts its QE program, only a few months after the Governing Council began an attempted exit.

To illustrate, the slide below contains examples of positive, neutral, and negative sentiment in Draghi’s voice (all are possible within the same press conference), as classified by our methodology. Readers are invited to decide whether they agree with the emotion attributed to Draghi by the methodology (answers are provided in the caption below the slide). Actual illustrations are also identified for comparison. (You have to download the PPTX file and click on the speaker icons to hear the audio.)

Click on the link below to download the PPTX with embedded audio: Siklos_Econbrowser_2023

Note: the first audio clip is neutral and is from April 27, 2017; the second is positive and is from the first press conference featuring forward guidance, on July 4, 2013; the last clip is negative and is from April 15, 2015, when an activist jumped on the table.

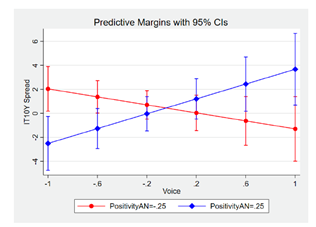

Among the hypotheses tested is whether positive emotions raise yields while negative emotions reduce them. We estimate a significant positive effect of changes in the framing of the press conference on spreads: a positive change in the statement leads to a wider spread, while a negative rephrasing leads to a narrower one. Marginal effect plots show the effect of vocal emotions given a specific level of positivity in the language used during the Q&A session. This lets us identify subtler influences on yield spreads than regressions alone reveal. The figure below shows the marginal effect of vocal emotions on ten-year Italian bond spreads.

Note: These plots show the marginal effect of a change in Voice at time t, given a specific level of PositivityAN (also at time t), on the spread of ten-year Italian government bonds. Changes in spreads are reported in basis points, on a scale from -25 to +25.
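Marginal effects of this kind come from a regression with an interaction term. As a stylized sketch (not the paper’s full specification, which may include further controls and a different standard-error estimator), suppose the change in the spread Δs is regressed on Voice, PositivityAN, and their product: Δs = α + β_V·Voice + β_P·PositivityAN + β_VP·Voice·PositivityAN + ε. The marginal effect of Voice is then β_V + β_VP·PositivityAN, so its size, and even its sign, depends on the tone of the language. The code estimates this by OLS with classical standard errors on synthetic data generated from made-up coefficients, then traces the effect with a 95% band across positivity levels.

```python
import numpy as np


def fit_interaction(ds, voice, positivity):
    """OLS of ds on [1, voice, positivity, voice*positivity]; returns (beta, cov)."""
    X = np.column_stack([np.ones_like(voice), voice, positivity, voice * positivity])
    beta, *_ = np.linalg.lstsq(X, ds, rcond=None)
    resid = ds - X @ beta
    n, k = X.shape
    sigma2 = resid @ resid / (n - k)          # classical (homoskedastic) error variance
    cov = sigma2 * np.linalg.inv(X.T @ X)
    return beta, cov


def marginal_effect_of_voice(beta, cov, positivity_grid):
    """b_voice + b_interaction * positivity at each grid point, with a 95% band."""
    p = np.asarray(positivity_grid, dtype=float)
    effect = beta[1] + beta[3] * p
    # Var(b1 + p*b3) = Var(b1) + p^2 Var(b3) + 2p Cov(b1, b3)
    var = cov[1, 1] + p**2 * cov[3, 3] + 2.0 * p * cov[1, 3]
    half = 1.96 * np.sqrt(var)
    return effect, effect - half, effect + half


# Synthetic data with made-up coefficients (not the paper's estimates).
rng = np.random.default_rng(1)
n = 2_000
voice = rng.normal(size=n)
positivity = rng.uniform(-1.0, 1.0, size=n)
ds = 2.0 * voice - 1.0 * positivity + 5.0 * voice * positivity + rng.normal(size=n)

beta, cov = fit_interaction(ds, voice, positivity)
grid = np.linspace(-1.0, 1.0, 5)
effect, lower, upper = marginal_effect_of_voice(beta, cov, grid)
for p, e, lo, hi in zip(grid, effect, lower, upper):
    print(f"positivity {p:+.1f}: effect {e:+.2f} bp [{lo:+.2f}, {hi:+.2f}]")
```

With these invented coefficients the effect of Voice is negative when the language is strongly negative and positive when it is positive, which is the kind of asymmetry a marginal effect plot makes visible and a single regression coefficient hides.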

The interplay of vocal emotions and language during the Q&A session has a significant and asymmetric influence on the spread of Italian bonds. The combination of negative vocal emotions and negative language leads to a wider spread. Ten-year Italian bond spreads also react differently depending on the degree to which voice and language signals conflict with each other: positive-positive and negative-negative combinations of voice and language widen the spread, while more conflicting signals narrow it. In the case of Germany (not shown), non-scripted communication has a positive effect on yields. However, this effect is limited to the short end of the yield curve and is asymmetric with respect to the kind of vocal emotion: more positive communication is associated with higher German bond yields. The paper also discusses the impact of Draghi’s emotions and the content of ECB press conferences on French and Spanish bond yields.

One bottom line of the paper is a reminder that communication goes beyond words. Future research should consider how financial markets perceive and process vocal cues during crises such as the COVID-19 pandemic or the surge in inflation since 2021, and how central bankers’ emotions can influence asset prices in times of rising economic and geopolitical uncertainty.

The paper is available from https://cama.crawford.anu.edu.au/publication/cama-working-paper-series/20867/emotion-euro-area-monetary-policy-communication-and-bond.

References

Alexopoulos, M., Han, X., Kryvtsov, O., Zhang, X., 2022. More than Words: Fed Chairs’ Communication During Congressional Testimonies. Working Paper.

Brunnermeier, M.K., James, H., Landau, J.P., 2016. The Euro and the Battle of Ideas. Princeton University Press.

Curti, F., Kazinnik, S., 2021. Let’s Face It: Quantifying the Impact of Nonverbal Communication in FOMC Press Conferences. Working Paper Draft: March 18, 2022.

García-Ordás, M.T., Alaiz-Moretón, H., Benítez-Andrades, J.A., García-Rodríguez, I., García-Olalla, O., Benavides, C., 2021. Sentiment Analysis in Non-Fixed Length Audios Using a Fully Convolutional Neural Network. Biomedical Signal Processing and Control 69.

Gorodnichenko, Y., Pham, T., Talavera, O., 2020. The Voice of Monetary Policy. Working Paper Draft: June 14, 2022; forthcoming in American Economic Review.

Pérez-Espinosa, H., Zatarain-Cabada, R., Barrón-Estrada, M.L., 2022. Emotion Recognition: From Speech and Facial Expressions. Chapter 15 in: Torres-García, A.A., Reyes-García, C., Villasenor-Pineda, L., Mendoza-Montoya, O. (Eds.), Biosignal Processing and Classification Using Computational Learning and Intelligence. Elsevier.

This post written by Dimitrios Kanelis and Pierre Siklos.

I’m utterly surprised this blog post did not draw more comments. I was recovering from a drinking binge so kinda missed this one. This is a very fascinating topic, and maybe bears more treading over. I’m not completely against further study of this topic, but I have mixed feelings. It almost seems….. “personally invasive”?? I think to some degree, when someone takes on a very public and high-profile job, they forfeit some privacy. But….. I have to say, if I had Draghi’s job or a similar one, I would find it somewhat disconcerting and borderline troubling that people were dissecting the minutiae of my voice inflections to make market moves. I hate to word it this way, but…… borderline “creepy”. But it is a public job….. so, I don’t know…….

I’ve always been ultra-sensitive to privacy issues even as a little child, so, I can’t get used to this generation of mobile phone video etc. Maybe showing my age?? I don’t know.