Reader rsm responds to my citation of GDP and Human Development Index data thusly:

Why not include the standard errors?

Again, because they would be so wide, you could tell any story and back it up with data?

Besides being an incredibly nihilistic statement, it’s also a generally ignorant one.

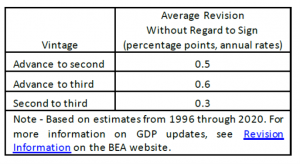

The simple answer is, there’s no such thing as a standard error on a GDP estimate, at least not in the sense of classical statistics. On the other hand, that doesn’t mean one shouldn’t try to convey the imprecision in GDP. And indeed, the BEA reports in each and every GDP release the following table:

Source: BEA.

So the BEA reports the imprecision with respect to subsequent revisions. It’s not a standard error — I don’t even know what it means to think of a standard error that represents the standard deviation of the sample population for GDP. Rather the table conveys the degree of imprecision with respect to subsequent revisions, with some view that subsequent vintages will more closely represent the “actual” GDP.

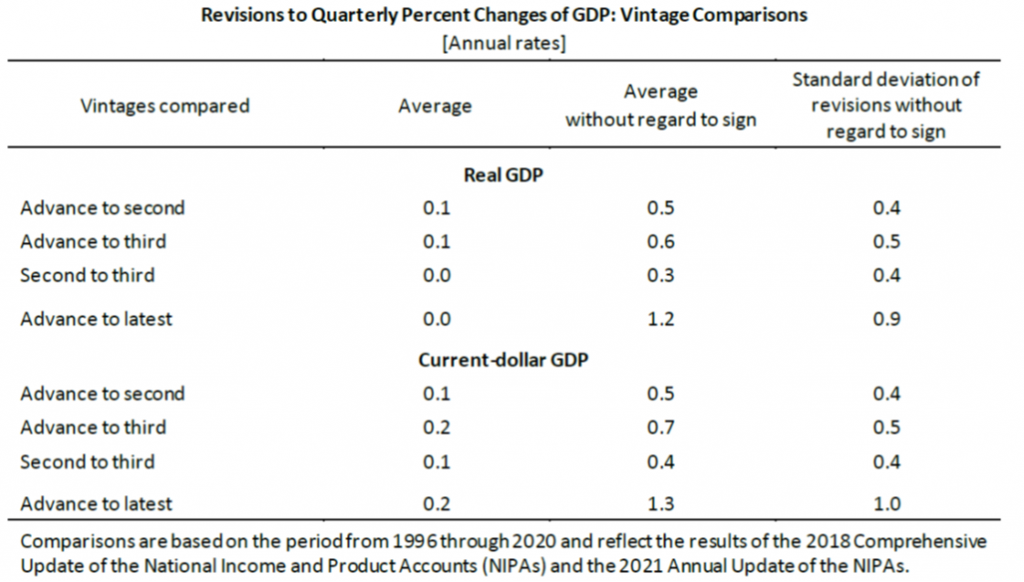

A more extensive table is in this document (“Comparisons of Revisions to GDP,” Sept. 2021) dedicated to tabulating the magnitudes of revisions.

Source: BEA (2021).

In a Survey of Current Business article (Dennis J. Fixler, Eva de Francisco, and Danit Kanal, “The Revisions to Gross Domestic Product, Gross Domestic Income, and Their Major Components,” January 2021), comparable statistics are provided for GDP, GDP components, GDI, and GDI components.

But it’s important to remember that these measures of spread are not “standard errors” in the conventional sense (at least not to me!).
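To make the revision-based notion of imprecision concrete, here is a minimal sketch of the kind of summary statistics one can compute by comparing an early estimate with a later vintage: the mean revision, the mean absolute revision, and the standard deviation of revisions. The growth rates below are invented purely for illustration (they are not BEA data), and the function name `revision_summary` is hypothetical. Note that the spread here is computed across revisions to published estimates, not across draws from a sampling distribution, which is why it is not a standard error in the classical sense.

```python
from statistics import mean, pstdev

# Hypothetical annualized growth rates (percent) for the same four quarters,
# as first published and as they stand in a later vintage.
# These numbers are made up for illustration; they are NOT BEA data.
advance = [2.0, 3.1, 1.2, 0.5]
latest = [2.6, 2.7, 2.1, -0.3]


def revision_summary(early, late):
    """Summarize revisions (later vintage minus early estimate)."""
    revisions = [l - e for e, l in zip(early, late)]
    return {
        # Average signed revision: shows whether early estimates lean one way.
        "mean_revision": mean(revisions),
        # Average size of a revision, regardless of sign.
        "mean_absolute_revision": mean(abs(r) for r in revisions),
        # Dispersion of revisions around their mean (population formula).
        "std_dev_of_revisions": pstdev(revisions),
    }


summary = revision_summary(advance, latest)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

A mean revision near zero alongside a sizable mean absolute revision would indicate early estimates that are not systematically biased but are still noisy relative to later vintages.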

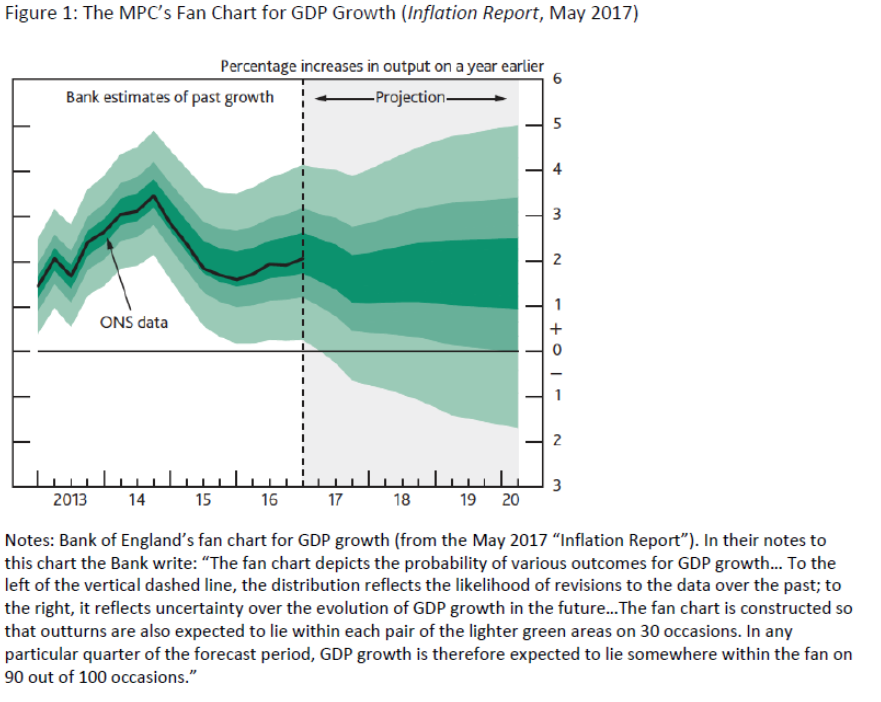

In his extensive discussion of how government statistics could be reported conveying more strongly the uncertainty surrounding estimates, Manski (JEL, 2015) cites the Bank of England’s uncertainty measures (“fan charts”) for UK GDP.

Galvao and Mitchell (2019) provide this example:

Source: Galvao and Mitchell (2019).

Suppose one really wanted to comprehensively address the issue of imprecision. What would it take? Manski (JEL, 2015) writes:

Considering the sources and implications of error from the perspective of users of economic statistics, rather than the perspective of statisticians, I think it essential to distinguish errors in measurement of well-defined concepts from uncertainty about the concepts that should be measured. I also think it useful to distinguish errors that diminish with time from ones that persist. To highlight these distinctions, I will separately discuss transitory statistical uncertainty, permanent statistical uncertainty, and conceptual uncertainty.

Data revisions fall under the first category. That leaves lots of other issues unaddressed. For permanent statistical uncertainty, Manski highlights survey nonresponse and imputations. For conceptual uncertainty, he examines the impact of seasonal adjustment. These latter are thorny problems, and providing a straightforward measurement of (as reader rsm wants) total, not just transitory, statistical uncertainty would be very difficult.

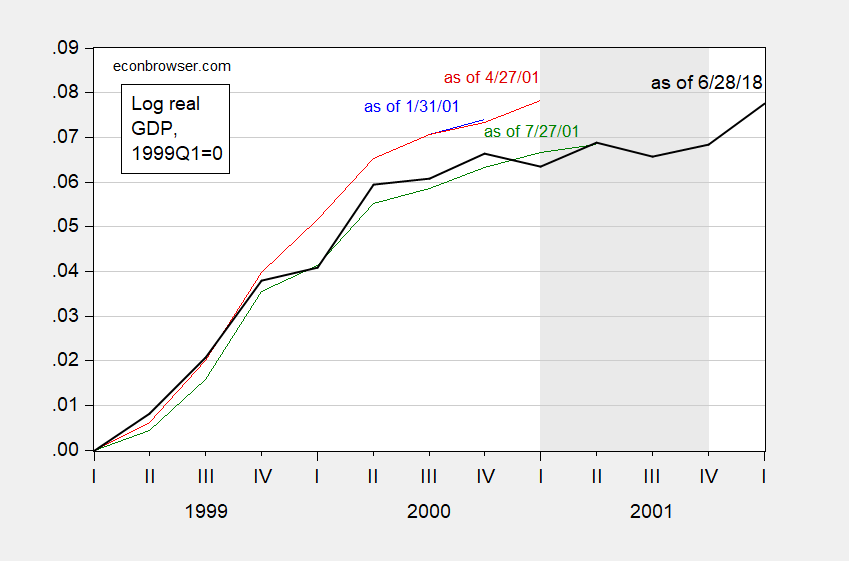

Returning to transitory uncertainty (specifically revisions), I reprise these graphs (from this post):

Figure 1: Real GDP normalized to 1999Q1 as of 1/31/2001 (blue), as of 4/27/2001 (red), as of 7/27/2001 (green), as of 6/28/2018 (black). NBER defined recession dates shaded gray. Source: ALFRED, BEA, NBER, and author’s calculations.

Figure 2: Quarter-on-quarter annualized growth rates of real GDP as of 1/31/2001 (blue), as of 4/27/2001 (red), as of 7/27/2001 (green), as of 6/28/2018 (black). NBER defined recession dates shaded gray. Source: ALFRED, BEA, NBER, and author’s calculations.
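For readers who want to reproduce this kind of vintage comparison, the two transformations in the figures can be sketched as follows. This is a minimal illustration under stated assumptions: the levels and vintage labels are invented (they are not ALFRED data), the helper names are hypothetical, and the growth rate uses the compounded annual-rate formula, which may differ slightly from a log-difference calculation.

```python
# Hypothetical real GDP levels (billions of chained dollars) for the same
# five quarters, starting at the base quarter, under two data vintages.
# The values and vintage labels are invented for illustration only.
vintages = {
    "early_vintage": [9000.0, 9050.0, 9120.0, 9200.0, 9225.0],
    "later_vintage": [9000.0, 9070.0, 9150.0, 9210.0, 9190.0],
}


def normalize_to_base(levels, base_index=0):
    """Rescale a series so the base quarter equals 1."""
    base = levels[base_index]
    return [x / base for x in levels]


def annualized_qoq_growth(levels):
    """Quarter-on-quarter growth at a compounded annual rate, in percent."""
    return [((cur / prev) ** 4 - 1) * 100 for prev, cur in zip(levels, levels[1:])]


normalized = {name: normalize_to_base(levels) for name, levels in vintages.items()}
growth = {name: annualized_qoq_growth(levels) for name, levels in vintages.items()}

for name in vintages:
    print(name, [round(g, 2) for g in growth[name]])
```

In this made-up example the final quarter shows positive growth in the early vintage and negative growth in the later one, the sort of sign change that makes real-time assessment of turning points difficult.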

Discussion of revisions on Econbrowser (abbreviated list): [1], [2], [3], [4], [5], [6], [7], [8], [9], [10], [11], [12].

Addendum, 11am Pacific:

For information on over-the-month revisions for establishment survey NFP, see here.

On benchmark revisions to the establishment survey numbers, see here.

The Regional Comprehensive Economic Partnership is a free trade association that includes China, Australia, South Korea, Japan and several other nations in East Asia:

https://www.bbc.com/news/business-54899254

Has there been any economic analysis of the possible effects from this free trade zone?

If past prognoses of the effects of United States’ trade agreements are any indication, labor will assuredly be the big winner…and the true beneficiaries (the special interests behind the deal) will remain lurking in the shadows.

Let’s hope Asians are a little more honest with their general public.

I was hoping for an informed discussion of this trade pact. Instead we got your usual uninformed chirping. BTW troll – try using that advanced tool called Google and find the Congressional Research Service analysis. It is actually useful reading.

pgl: See Petri and Plummer (2020).

Thanks as this is even better than what little CRS wrote. Let me focus on one part of the abstract:

“The new accords are moving forward without the United States and India, once seen as critical partners in the CPTPP and RCEP, respectively. Using a computable general equilibrium model, we show that the agreements will raise global national incomes in 2030 by an annual $147 billion and $186 billion, respectively. They will yield especially large benefits for China, Japan, and South Korea and losses for the United States and India. These effects are simulated both in a business-as-before-Trump environment and in the context of a sustained US-China trade war. The effects were simulated before the COVID-19 shock but seem increasingly likely in the wake of the pandemic. Compared with business as before, the trade war generates large global losses rising to $301 billion annually by 2030. The new agreements offset the effects of the trade war globally, but not for the United States and China. The trade war makes RCEP especially valuable because it strengthens East Asian interdependence, raising trade among members by $428 billion and reducing trade among nonmembers by $48 billion.”

As I read the entire report, I’d be curious if the authors harken back to Viner’s trade creation and trade diversion concepts!

As expected, “RCEP will not have chapters on labor, the environment, or state-owned enterprises.” That pretty well indicates who the beneficiaries are NOT…though chapters on labor and the environment in other trade agreements were just window dressing, never intended to be enforced.

It seems JohnH is chirping about a single sentence in their discussion. He could have informed us that this sentence is from page 6 under the heading:

WHAT THE EAST ASIAN TRADE AGREEMENTS WILL DO

I’m surprised JohnH is not chirping that Paul Krugman failed to write on these topics in his latest NYTimes piece.

Hilarious!!! Just the other day, pgl took a single sentence buried at the end of a paragraph deep within a piece to “prove” that economists talk all the time about making carbon taxes popular by refunding the proceeds to people hardest hit by the tax.

Now he complains that I spotted a single sentence deep within an analysis that shows that RCEP has nothing to do with improving the lot of ordinary workers. (Some of the nations involved have a GINI coefficient almost as bad as the US or even worse, so this is not surprising: oligopolists doing trade deals with oligopolists.)

It would be nice if a regular NY Times pundit made an effort to point out the true beneficiaries of corporate friendly trade agreements, not imaginary ones.

JohnH: Just because some corporate interests benefit from a trade agreement doesn’t mean that workers or consumers won’t benefit. But I suspect you’re not a fan of CGE’s.

@ Menzie

Regarding CGEs, don’t you think it’s awfully strange that around the time of 2015–2016 when Hillary Clinton goes around the Midwest trying to tell people she “co-authored” NAFTA with her husband and she’s on board TPP the awkward silence and/or bitter laughter is indicative of ANYTHING?!?!?! Where are these great stories of workers who benefitted from NAFTA?? Do you think Hillary could just have found maybe FIVE workers she could have taken with her on the campaign trail to exhibit and play “show and tell” with. “Here is worker Ned Flanders, see how much he benefitted from NAFTA!!!!” Strange how the NAFTA and TPP beneficiaries can only be found in academics’ matrices. Strange indeed.

Your magical CGEs remind me of James Carville’s comments with Judy Woodruff on “Woke” culture. Democrats keep thinking if they drone on to eternity about it, it’s going to win the rural voters over. How exactly is that working in Virginia??

Moses Herzog: It has always been hard to sell to people the prospective but uncertain benefits of some measure, in the face of concrete and immediate costs. I’d say that is an iron rule of policy implementation.

If you think CGE’s are magical (and I agree they are a little, just like an optimization routine for logistics), then you think DSGEs are magical; just like you think a hundred equation macroeconometric model like the Fed’s FRBUS model is too…

……. Keep in mind Menzie, before being too harsh on Carville’s reality check, LSU grad Carville understands the American rural voter better than 98% of the pundits out there. There’s a good chance Bill Clinton never would have won in 1992 without Carville’s political consultancy, which would probably have made a difference on minority economists being invited on the CEA at that time if a Republican had won in 1992.

That’s not a criticism of anyone, quite the opposite. It’s a statement of fact.

@ Menzie

Well, I’ll admit I still prefer IS-LM to DSGE. I’m probably indicting myself there on certain things because that’s not a surprise coming from someone (me) who is somewhat math challenged. I’m not saying “junk DSGEs”, but I am saying that I still think IS-LM framework tells us much more than DSGEs do in the year 2021 (that could change). And I’m not saying CGEs “should be trashed” either.

I’m not anti-trade and I’m trying not to be “too pointed” about this. My main argument is that if NAFTA and TPP were what they are billed/advertised as, we would see more evidence of this than just lower producer costs and/or lower consumer prices. What is that weighed against lost employment?? I’ll fully confess my gauge on how lost employment weighs against lower producer costs and lower consumer prices is not as good as yours (no sarcasm). But I’ll still hold it’s not what it was sold as. If it’s a production costs thing then politicians should say “There’s a trade off here, lower prices at Wal Mart but many of you will lose your jobs”. Don’t tell me/Joe Q Public that “This means thousands of new jobs!!!” when it doesn’t, and some credentialed economists (not you I don’t think) were selling those “new jobs!!!” lies together with the politicians.

Let’s hope Asians are a little more honest with their general public.

[ Infrastructure development and free trade spreading in the wake allowed the ending of severe poverty for millions and millions of Chinese. Infrastructure development in Laos and free trade with China promises endless opportunity through Laos. China has just completed a high-speed railroad through what was land-locked Laos. Laos is now to be land-linked and have infrastructure assisted access to trade with a billion Chinese. Vietnam has just begun to transport freight on rail lines through China…. ]

Excuse me but what does this have to do with the trade pact under discussion?

RCEP = Brunei, Cambodia, Indonesia, Laos, Malaysia, Myanmar, the Philippines, Singapore, Thailand and Vietnam – plus Australia, China, Japan, New Zealand and South Korea

ASEAN = Brunei, Cambodia, Indonesia, Laos, Malaysia, Myanmar, the Philippines, Singapore, Thailand and Vietnam

China is the prime trade partner of the RCEP and ASEAN countries and has been steadily working on developing infrastructure in and increasing trade with the groups of countries. The effects have been to steadily contribute to mutual general and productivity growth in the combinations of countries. Labor has only benefited; the more trade the more the benefits.

Thanks for the clarification!

https://fred.stlouisfed.org/graph/?g=FwYX

August 4, 2014

Real per capita Gross Domestic Product for China, Thailand, Vietnam, Cambodia and Laos, 1995-2020

(Percent change)

https://fred.stlouisfed.org/graph/?g=FwZ1

August 4, 2014

Real per capita Gross Domestic Product for China, Thailand, Vietnam, Cambodia and Laos, 1995-2020

(Indexed to 1995)

http://www.news.cn/english/2021-10/19/c_1310254747.htm

October 19, 2021

China-Laos railway could be game changer for development of Laos: businesswoman

http://www.news.cn/english/2021-11/04/c_1310290620.htm

November 4, 2021

China’s import expo brings world towards brighter future

From pursuing creative ways to grow foreign trade, to improving its business environment and seeking deeper bilateral, multilateral and regional cooperation, China has walked the talk in sharing its development opportunities and building an open global economy.

“RCEP will not have chapters on labor, the environment, or state-owned enterprises.” That pretty well indicates who the beneficiaries are NOT…

[ This is incorrect, in that the countries involved in the trade partnership lean relatively strongly to social-democracy and environmental protections. ]

The good news is that we mostly are concerned about the change (delta) not the precision of the absolute number. As long as the errors are systematic and pull in the same direction, they are not of any real consequence.

We also all know that there will be revisions to early estimates. So knowledgeable people will avoid critical decisions based on first estimates. The same thing goes for predictions. The science of economics is not as solid as e.g., biomedical science. But that is well known and good economists as well as “users” of economic data understand those weaknesses. The first thing I do when I hear a prediction from an economist is to ask how good has that person been with their previous predictions, and have they been able to recognize and learn from previous failed predictions. That is why I listen to Krugman and tune out Larry Summers.

Absolutely.

Nothing [with tens or hundreds of millions of inputs] is perfect/precise.

The important things are assurances of comparability, levels, trends, etc.

There are other ‘issues’ about which I will not ‘squawk.’ Not my business.

“I don’t even know what it means to think of a standard error that represents the standard deviation of the sample population for GDP.”

It means rsm is trying to seem smart, pretending to have knowledge in an area where he or she has none.

What it also means is that rsm doesn’t care that he or she is writing nonsense. The nonsense of asking for standard errors or error bars where no such thing can be calculated has been pointed out to rsm repeatedly, but rsm keeps pretending to be making a serious critique. Someone interested in the subject at hand would have looked into the circumstances in which standard errors can be calculated. rsm just doubled down on being wrong.

I believe it was baffling who suggested that rsm is merely trying to interfere with useful discussion – another troll along the same lines as climate-change deniers. ltr smears Chinese propaganda on the screen, CoRev makes up nonsense about Democrats and rsm throws error-bar tantrums. All the same stuff.

as pauli is credited with insulting a fellow physicist, “he’s not even wrong”.

Yep. I love that quote, and am reminded of it often by our local trolls.

unfortunately most of the targets do not even understand what it means.

https://www.politico.com/news/magazine/2021/11/04/vaccine-manufacturers-are-profiteering-history-shows-how-to-stop-them-519504

This discussion of the high profits being received by the providers of COVID-19 vaccines raises policy issues which will surely draw some heat from Big Pharma.

Action Aid is all over the financials for Moderna and Pfizer:

https://actionaid.org/news/2021/pharmaceutical-companies-reaping-immoral-profits-covid-vaccines-yet-paying-low-tax-rates

Let’s back up on this claim that the price is 24 times the cost of production. Moderna’s 10-Q is reporting cost of production = 15% of revenues. But its selling costs are only 4% of revenues so insanely high profits are being generated.

Action Aid is suggesting that Moderna’s income tax bite is a mere 7% of profits. How could it be so low? Oh wait – Trump’s tax cut for the rich not only lowered the tax rate to 21% but something called FDII gave intangible profits a huge discount. We should at least repeal those parts of that stupid 2017 tax deform!

Not to be hard on Action Aid which tries hard but a little clarification on what they said about Moderna evading taxes. I checked their 10-Q and found this:

“Our effective tax rate for the three and nine months ended September 30, 2021 was 6% and 7%, respectively, and was lower than the U.S. statutory rate primarily due to the benefit related to the release of the valuation allowance on the majority of our tax attributes and other deferred tax assets, the benefit of the foreign derived intangible income deduction, as well as a discrete item for excess tax benefits related to stock-based compensation. Our effective tax rate for the three and nine months ended September 30, 2020 was lower than the U.S. statutory rate primarily due to the valuation allowance.”

See their 7% is correct but the interpretation is off. Now my complaint about Trump’s FDII is borne out here but the main reason this calculation is so low is that their past losses are partially offsetting their current income.

Left wing organizations like Action Aid serve a purpose but they need to do a little fact checking at times.

1) Economists lack real world exposure.

2) They rely on last period statistics, which are usually a lot more imprecise than they acknowledge.

3) They are also ideological and can torture statistics to get their desired outcome.

4) That’s how we get studies that say “Enhanced Unemployment Benefits have no impact on employment” or “Higher Minimum wage does not result in lower employment” or “Unskilled Illegal Immigrants do not depress unskilled wages” or “Higher Tax Rates do not lower investment”

sammy: 1) I wonder how many economists you know – after all we all live on the same globe you do, have to pay bills, taxes (well, I’m assuming you actually pay your taxes). 2) I just provided a whole post on what stats BEA reports on GDP/GDI regarding revisions. I bet I have a lot better idea about data imputations than you do. Look at CEA blog, and note how many times they warn not to rely on one data point for one series (e.g., the October NFP). In contrast, scanning through your comments, I’ve not seen one citation of a standard error. 3) Please point out what data I’ve tortured to get a point across on this blog; on the other hand, when I look at your comments… — so are economists any more likely to “torture” data (or for you, anecdotes) than any other person? 4) I see raising minimum wage decreases employment is a matter of faith, rather than empirics, for you.

“In contrast, scanning through your comments, I’ve not seen one citation of a standard error.”

In Sammy’s case, it is nothing more than one massive error after another.

“They are also ideological and can torture statistics to get their desired outcome”. Pot calling the kettle black. Your only real world experience comes in the form of directives from Kelly Anne Alternative Facts Conway.

)1 sammy engages in argument by assertion.

B) sammy never offers evidence because he doesn’t have any regard for facts.

&/ sammy isn’t smart enough to use upper case letters.

)( sammy repeats standard, baseless accusations because he lacks the ability to think up original baseless accusations

🙂 I’m running out of ridiculous ways to misuse parentheses way before I’ve exhausted the list of ways in which sammy is a drag on average intelligence among chordates.

Pfizer is developing a pill form anti-viral treatment that may help those unfortunate to catch COVID-19:

https://www.nytimes.com/2021/11/05/health/pfizer-covid-pill.html

Sorry rsm but their research has not put standard deviations around the research yet.

1) Economists have limited exposure to the real world

2) Economists extrapolate off last period statistics, which are much more imprecise than they acknowledge

3) Economists are ideological and can torture statistics to reach the desired outcome

This is why we get studies like: “Enhanced Unemployment Benefits do not affect Employment” or “Higher Minimum Wage does not reduce Employment” or “Unskilled Immigration does not Affect Wages” or, my favorite, “Increased Taxes do not Reduce Investment”

sammy: On the other hand, there are howlers like this comment by you:

That was nearly a year after we saw a big discrete drop in US soybean prices relative to Brazilian and Argentine…

“That was nearly a year after we saw a big discrete drop in US soybean prices relative to Brazilian and Argentine”

To use his own phrase – Sammy was relying on last period’s statistic

I guess your derision should also be directed to Barkley:

Barkley Rosser

June 21, 2019 at 9:01 pm

I (also) think Steven is largely right, and I think Menzie is not in great disagreement.

I wouldn’t be in a hurry to use Barkley Junior quotes as a personal defense. Barkley Junior tells lies about people here all the time. I can say unequivocally I never once on this blog labeled criticism of CoRev as a witch hunt.

https://econbrowser.com/archives/2019/06/winning-soybeans-front#comment-226606

Yet a “professor” loves to run around this blog telling patent lies. I would say even pgl would attest to that fact, if she also hadn’t told so many lies on this blog:

Moses,

I know it looks silly to deny lying when one is being accused of lying “here all the time.” But, sorry, in fact I have never consciously lied here. I have made mistakes, and when those are pointed out, I apologize. So if I mistakenly accused you of labeling criticism of CoRev a “witch hunt,” I apologize. I don’t know.

I do find it odd that in the long link you provided, when I made that statement, you did not contradict me at the time. I could not find you saying “I never said that.” Did you? And if you did not, why not? Why wait over two years to come charging me with lying, indeed with lying “here all the time”?

It is rather odd that a large chunk of that link is CoRev accusing me of lying about my father’s contributions to the US space program, which were all true. CoRev did what he always does, when caught lying he moves the goal posts and introduces a fresh lie. This link is an outstanding example of this, with him providing contradictory accounts of his various supposed medals. Menzie totally sided with me and challenged him to put up or shut up on some of his claims. But he never did.

Needless to say, this was quite a spectacle of a link.

So, again, if indeed I made an inaccurate claim about you and witch hunts of CoRev, I apologize. But I again note that it looks like this is the first time you have ever made this claim. You did not do so at the time in this link you provided.

BTW, Moses, I note that in this link where you note me saying you referred to a “witch hunt” regarding CoRev, very shortly after that remark you declared that CoRev was actually born from the belly of the MAGA movement. You made no specific comment about my witch hunt remark.

I do not know where I got that from or whether you said it or not. Not going to go digging around for the origin. But I suspect that one of two things happened, as indeed I do not consciously lie. Maybe someone else wrote it and I misread your name as the one who did it. I have made that mistake before, misreading somebody’s name, shameful I know. Or else maybe you said something sort of like that but not using that term, and I substituted it for what you did say. Whatever, but it seems pretty strange that you made such a fuss about it now.

WTF? I would ask if you have lost your mind but that was settled a long time ago.

Man, you’re obsessed by/with soybeans.

CoRev: No, I’m obsessed with willfully ignorant people stating with complete certitude things that are patently wrong. You are poster child for that type of person. That’s why your statements keep on showing up in the year-in-review compendia of outrageously incorrect statements.

By the way, should you ever admit you were wrong about (1) the use of futures as predictors, and (2) the fact that 2-plus years seems to be a bit long to be defined as a “blip”, then I’ll stop talking about your statement.

Menzie, I often do a search on CoRev to determine how deeply and/or frequently I am living in your minds. It is always illuminating. 😉

CoRev: You’re in my mind because you are a canonical example of someone who is supremely ignorant, yet never admits his/her own failure to understand or have learned. In other parlance, you constitute a target rich environment.

Menzie, I’ve moved on past soybeans, but you, clearly obsessed, continue to bring them up. Strangely and apparently, you use the soybean subject instead of a rebuttal to a non-soybean comment.

CoRev: Well, if you’ve truly moved beyond soybeans, why don’t you fess up and admit you were wrong?

And what about the time you kept on asking me to provide the “raw data” rather than the data from FRED, when the two were the same?

Or your assertion that the Baker-Bloom-Davis EPU index is “left biased”?

Or your 2007 assertion that the budget balance was actually improving?

Or…

Menzie, more illumination. Thanks! 😉

Let’s talk about the real issues. All through the Trump administration we were told by you and your followers that the Dem policies were far superior than Trump’s. Most arguments were based upon some sub-category of a major economic metricy. You recently mentioned the trade war with China due to Trump’s tariffs, and yet the tariffs are still in place. Other major metrics are also performing worse than under Trump.

This is emphasized by the tenor of the articles. Recent articles center around: “it’s not as bad as we see/feel/think” while in the 1st year of Trump’s administration the articles were just the opposite “it’s worse than we see/feel/think”.

We warned you before the election that Biden would be disaster. We warned you and highlighted Biden’s disasters after the election. And now, the electorate has warned you that many of your preferred policies are intolerable.

Unless Dems change policy biggly, 2022 will be another election blood bath, as we warned the 2021 elections appeared to be.

CoRev: First, I don’t think you are using the word “metricsy” in the correct way. You should look up the definition.

Second, I’ve written that we should remove the tariffs, particularly the Section 232 tariffs which were levied without valid justification, and arguably exacerbate inflationary pressures. In other words, we would be better off if those tariffs were off.

It’s a disaster under Biden? Please compare employment now vs. under Trump. Please compare Trump era average growth of 1.4% vs 4.8% under Biden so far.

It’s tiresome to listen to the maga crowd moan about how poorly biden is doing, considering everything in the country right now is outperforming the trump administration. EVERYTHING.

Menzie, you compound my typo, metricy with your own metricsy. Mine was supposed to be metric. I won’t guess what you were trying to say.

Why did you miss another opportunity to reference soybeans while mostly ignoring my points?

CoRev: You haven’t addressed a single point I asked.

You of all people have to be kidding me. You and Sammy spewed enough intellectual garbage on this issue to feed the planet.

“Man, you’re obsessed by/with soybeans.”

corev, would you rather we just make up some alternative facts and anoint you as correct?

You do it all the time!

Menzie, we can all make good guesses on what things sammie regularly watches on TV (I’m doubtful he reads too much, QAnon message boards maybe just so his kids aren’t to fast to pass him in reading comprehension??), but if for nothing other than laughs, wouldn’t you pay a nickel to know how sammy sources his news?? I said a nickel now. You know, like tossing out a couple coins for the novelty carnival act. I mean wouldn’t you, for laughs??

*too fast

You edited your earlier stupid rant? This version happens to be even dumber than the previous one.

“Increased Taxes do not Reduce Investment”

I asked you to read that 1992 Economic Letters paper on the capital gains tax. I guess the fact that it was 4 pages long meant it was too much for your feeble little brain!

Hey Sammy – the City of West Hollywood just raised its minimum wage to $17.64 an hour. Something tells me that the end of the world will not occur but if you have a contrary analysis that it will end business as we know it – submit the paper to the Journal of Labor Economics. The editor will surely have a chuckle!

sammy,

You are stupidly repeating stupid arguments. What makes you think that “Economists have limited exposure to the real world”? Do you think that we actually live in ivory towers and never set foot outside of them or never go to grocery stores or buy gasoline or get sick and go to the hospital? Just what on earth do you mean by this ridiculous statement?

Oh, I know some economists have done studies that come to conclusions that you do not like for your own pet ideological reasons. Is that it? But what makes you think you are right and they are wrong? Have you done any of those studies yourself? Something tells me the answer is no, you are just reciting ideological gospel.

Life is too short. I’ll leave it to you to note Sammy’s incessant stupidity as well as that worthless wino who wants to run around crying liar, liar pants on fire.

Huh??? I prefer the label “Connoisseur of extremely cheap adult beverages”. I’m looking for a commercial sponsor. I see myself as more of an Orson Welles type endorser than a Sammy Hagar type endorser. I could take on commercial directing duties as well, and I can write AV scripts.

Imagine me in a suit, a bit rotund (a pillow under my blazer for the true Orson Welles effect) holding up a full wine glass.

“We will drink Beringer Wine, [ dramatic pause ] …..until we’re fully sublime”

Moses,

We got it. You want to be the first customer when macroduck opens his planned Error Bars,:-).

I used to go to bars a lot. But I am now the George Thorogood “I drink alone” guy. For reasons of both personal safety and cost. But, yeah if macroduck lived nearby I would try his bar in the name of camaraderie. Unfortunately for me, I think macroduck is way too sharp a man to be a resident of the redneck backwards state I currently reside in.

I may start a new habit though, in my backyard, I have what David Lee Roth calls a “One Piece Thermo-Molded Country Plastic Chair” that I got for $20 about 2 years ago. I am thinking of making a new habit of drinking in that chair in the fresh/brisk autumn air (that I love) the cooler months (I hate summer weather). What has made me hesitant to do this is I have what I strongly suspect to be a MAGA pack of neighbors at about 2:30 direction as I am sitting in my backyard, and I am scared with the fear of God, they will take my sitting there as an “invitation” for conversation and I will be awkwardly “forced” to talk with them. Me in the “red light” portion of my drinking, (hell maybe even the “yellow light”) with MAGA illiterates is an uhm, …….”combustible” recipe for major problems.

The positive part of this new operational environment would be Professor Chinn would receive less garbage from me while drinking, so that’s one huge upshot.

“Economists have limited exposure to the real world”

unlike the millions of small business owners who went out of business through the years. sure the academy is filled with ivory tower elitists who know nothing about the real world. and the real world is full of brilliant business people who know everything about economics. not. for every successful businessman, there are A LOT of failures in the real world, sammy. you and your restaurant business were one of them.

baffling,

Yes, well my restaurant didn’t work out. But I had some great experiences, and learned a lot. Isn’t that what life is all about?

I’m sure your customers loved the beer and chicken wings being served by your Hooter girls!

Sammy, my guess is you did not learn a damn thing from your failure, based upon the continued stooopidity you bring to this blog on a daily basis. Yes i know that was a personal insult and mean. But it’s the truth and i am not in the mood to sugarcoat it.

When I lived on the Uppity East Side, my favorite bar had its share of right wing business people who used to whine “Obama never made payroll” and other garbage like that. Of course Obama had the biggest payroll on the planet, and one of these business people was a doctor whose single employee ran his damn business. But of course this doctor knew more about economics than the rest of us. Sort of like Dr. Rand Paul I guess!

No problem, Baffling. Yours is just a typical response of progressives. I expect it.

http://www.news.cn/english/2021-11/04/c_1310289485.htm

November 4, 2021

RCEP to take effect on Jan. 1, 2022

BEIJING — The Regional Comprehensive Economic Partnership (RCEP) agreement will take effect on Jan. 1, 2022, according to the Ministry of Commerce.

As of Nov. 2, the ASEAN Secretariat * has received instruments of ratification/acceptance (IOR/A) from six ASEAN member states — Brunei, Cambodia, Laos, Singapore, Thailand and Vietnam — as well as from four non-ASEAN signatory states — China, Japan, New Zealand and Australia.

As provided by the agreement, the RCEP will enter into force 60 days after the date at which the minimum number of IOR/A is achieved. This means that the RCEP agreement shall enter into force on Jan. 1, 2022.

Signed on Nov. 15, 2020, the 15-member RCEP is the world’s largest free trade agreement covering about 30 percent of the world’s population. Its economic and trade volume also accounts for 30 percent of the world’s total.

The entry into force of the agreement is of great significance for further promoting intra-regional free trade, stabilizing industrial and supply chains, and promoting China’s high-level opening-up, the ministry said.

* Brunei, Cambodia, Indonesia, Laos, Malaysia, Myanmar, the Philippines, Singapore, Thailand and Vietnam

Menzie has provided a link to a very interesting analysis of this trade pact.

BLS released its October Employment report:

https://www.bls.gov/news.release/empsit.nr0.htm

Payroll survey says employment up 531 thousand.

Household survey says employment-to-population ratio up to 58.8%.

We will leave it to rsm to calculate his standard errors!

Peloton’s sales fell below expectations and they had a larger than expected operating loss – which caused its stock price to fall:

https://www.cnbc.com/2021/11/04/peloton-pton-to-report-fiscal-q1-2022-earnings-.html?__source=sharebar|twitter&par=sharebar

Hey when your business model relies on charging people a fortune for indoor biking during a pandemic, people getting vaccinated and able to go back to the gym can be a real bummer.

Given the fact that soybeans are a fungible product, and ——- corruption is rampant…

[ The assertion is false and prejudiced. ]

Consider the author of that comment. Everything he writes is false.

Speaking of revisions, for new jobs:

August (“OMG! only 235K”) is now 438K in third revision.

September (“we’re entering recession only 194K”) is now 312K in second revision.

October first estimate is 531K.

Doesn’t look like a recession to me — but that opinion could be revised!
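For anyone who wants the revision sizes spelled out, here is a minimal sketch in Python using only the four figures quoted above. The variable and function names are made up for illustration, and a two-month average is only arithmetic on this comment's numbers, not a stand-in for the official revision statistics BLS or BEA publish.

```python
# First-print and latest payroll job gains (thousands), as quoted in the comment above.
estimates = {
    "August": {"first": 235, "latest": 438},     # latest = third revision
    "September": {"first": 194, "latest": 312},  # latest = second revision
}


def revision(first, latest):
    """Revision in thousands of jobs: latest estimate minus first estimate."""
    return latest - first


# Signed revision for each month.
revisions = {
    month: revision(v["first"], v["latest"]) for month, v in estimates.items()
}

# Mean absolute revision over the months listed (only two here).
mean_abs_revision = sum(abs(r) for r in revisions.values()) / len(revisions)

for month, r in revisions.items():
    print(f"{month}: revised by {r:+d}K")
print(f"Mean absolute revision: {mean_abs_revision:.1f}K")
```

On these figures the August print moved up by 203K and the September print by 118K, which is the same kind of revision-based measure of imprecision the post describes for GDP.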

https://fred.stlouisfed.org/graph/?g=Hy9b

January 4, 2018

United States Employment-Population Ratios for Men and Women, * 2017-2021

* Employment age 16 and over

https://fred.stlouisfed.org/graph/?g=sxNb

January 4, 2018

Employment-Population Ratios for White, Black and Hispanic, * 2017-2021

* Employment age 16 and over

In other news, wages are up, 5.4% YoY for production workers and 12.4% YoY for leisure and hospitality (restaurants and hotel workers).

This is great news for low wage workers but I’m sure CEOs are complaining that their million dollar bonuses aren’t keeping up with inflation for yachts.

https://fred.stlouisfed.org/graph/?g=FTIA

January 4, 2020

Average Hourly Earnings of Private Production and Nonsupervisory Workers, * 2020-2021

(Percent change)

* Production and nonsupervisory workers accounting for approximately four-fifths of the total employment on private nonfarm payrolls

https://fred.stlouisfed.org/graph/?g=FU9h

January 4, 2020

Average Hourly Earnings of All Private Workers, 2020-2021

(Percent change)

https://www.msn.com/en-us/sports/nfl/nfl-world-reacts-to-aaron-rodgers-comments-on-the-pat-mcafee-show/ar-AAQmQLy?ocid=uxbndlbing

When I heard Aaron Rodgers’s excuse for not getting vaccinated while he lied to everyone claiming he did, I jokingly claimed Tucker Carlson was his doctor. After his appearance on something called the Pat McAfee Show, this is no longer a joke. Aaron Rodgers is seriously deluded and a hazard to the health of anyone he comes in contact with. No way should anyone in the NFL take the field with this clown.

We know Faux News lies about inflation but now CNN is giving them some real competition with the Dallas cow whining about the cost of a gallon of milk:

https://jabberwocking.com/why-does-cnn-lie-about-inflation/

At times Kevin Drum actually does some real research!

Menzie,

“The wise man is one who knows what he does not know.”

-Lao Tsu